Weekly Review: Training the Apprentice

The week's curated tutorials, tools, and news for developers learning to train the agent rather than approve its work

Altered Craft

Happy May the Fourth, and welcome back to Altered Craft’s weekly AI review for developers. Thanks for spending some of your week with /AC. One thread runs through this edition: the engineer’s role is shifting from writing code to training the apprentice. Karpathy names the discipline, Parsons argues senior engineers should move from approver to trainer, and pieces on prompts as artifacts, skill packs, and harness design all sketch what that practice looks like. The model is the apprentice, and the craft is the training.

TUTORIALS & CASE STUDIES

Karpathy: From Vibe Coding to Agentic Engineering

Watch time: 30 min

A year after coining “vibe coding,” Andrej Karpathy argues at Sequoia’s AI Ascent 2026 that agentic engineering is the serious discipline forming on top of it. He reframes LLMs as ghosts rather than animals, and explores Software 3.0 and verifiability limits.

The takeaway: Treat LLMs as jagged, statistical collaborators that demand taste and judgment, not autonomous coworkers you can hand the keys to. The work is verification, not delegation.

Structured Prompt-Driven Development: Treating Prompts as First-Class Artifacts

Estimated read time: 19 min

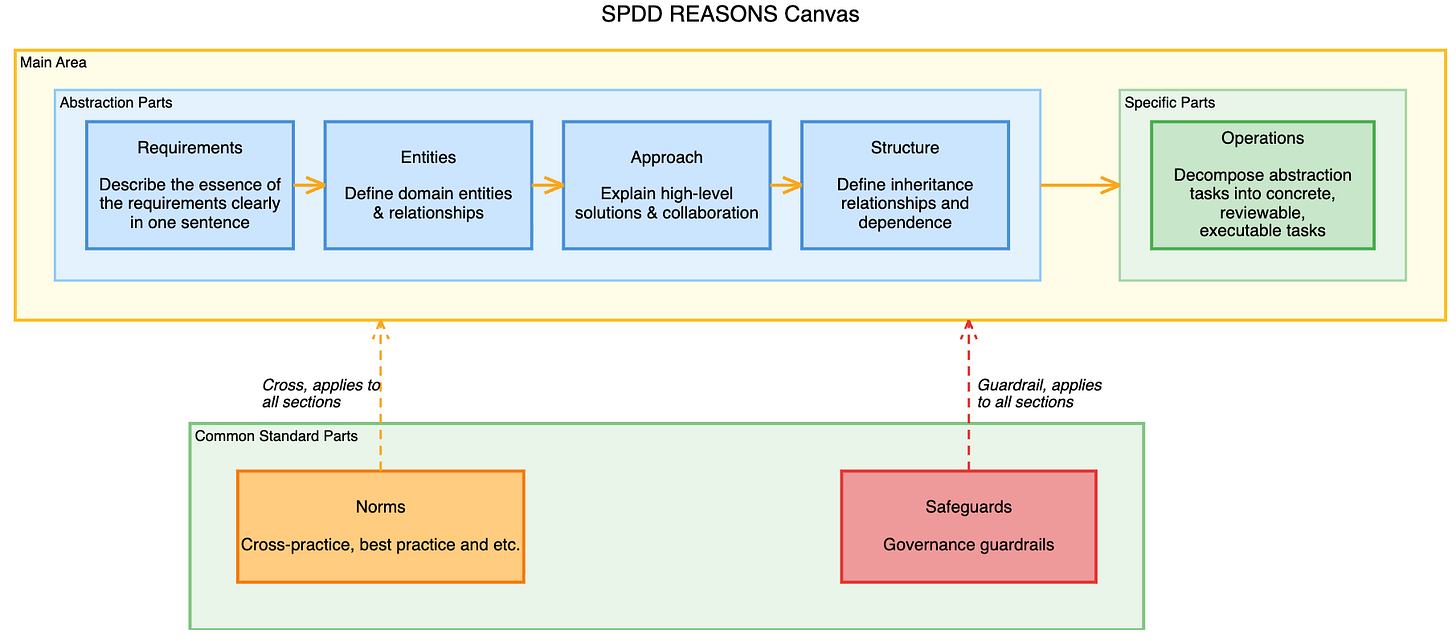

Putting that discipline into practice, Wei Zhang and Jessie Jie Xia of Thoughtworks introduce Structured Prompt-Driven Development, treating prompts as version-controlled, reviewable artifacts. The seven-part REASONS Canvas shapes intent before generation, and one rule anchors the workflow: when reality diverges, fix the prompt first, then update the code.

Key point: When AI-generated code diverges from intent, fix the prompt first, then regenerate the code, so prompts and implementation never silently drift apart.
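
A quick sketch of one way to catch that drift, not something the SPDD article prescribes: stamp generated code with a short hash of the prompt that produced it, then compare on review. The helper names (`prompt_fingerprint`, `stamp`, `is_in_sync`) and the header comment format are assumptions made up for illustration.

```python
import hashlib

HEADER = "# generated-from-prompt: "  # hypothetical marker, not an SPDD convention


def prompt_fingerprint(prompt: str) -> str:
    """Short, stable hash of the prompt text."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:12]


def stamp(code: str, prompt: str) -> str:
    """Prefix generated code with the fingerprint of its source prompt."""
    return f"{HEADER}{prompt_fingerprint(prompt)}\n{code}"


def is_in_sync(stamped_code: str, prompt: str) -> bool:
    """True only if the code's first line carries the current prompt's fingerprint."""
    lines = stamped_code.splitlines()
    first_line = lines[0] if lines else ""
    return first_line == f"{HEADER}{prompt_fingerprint(prompt)}"


if __name__ == "__main__":
    prompt = "Write a function that adds two numbers."
    code = stamp("def add(a, b):\n    return a + b\n", prompt)
    print(is_in_sync(code, prompt))                        # prompt unchanged
    print(is_in_sync(code, prompt + " Validate inputs."))  # prompt edited, code stale
```

A check like this could run in CI next to the version-controlled prompt, so an edited prompt with stale code fails loudly instead of drifting.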

Long-Running Agents: Beyond the Chat Window

Estimated read time: 9 min

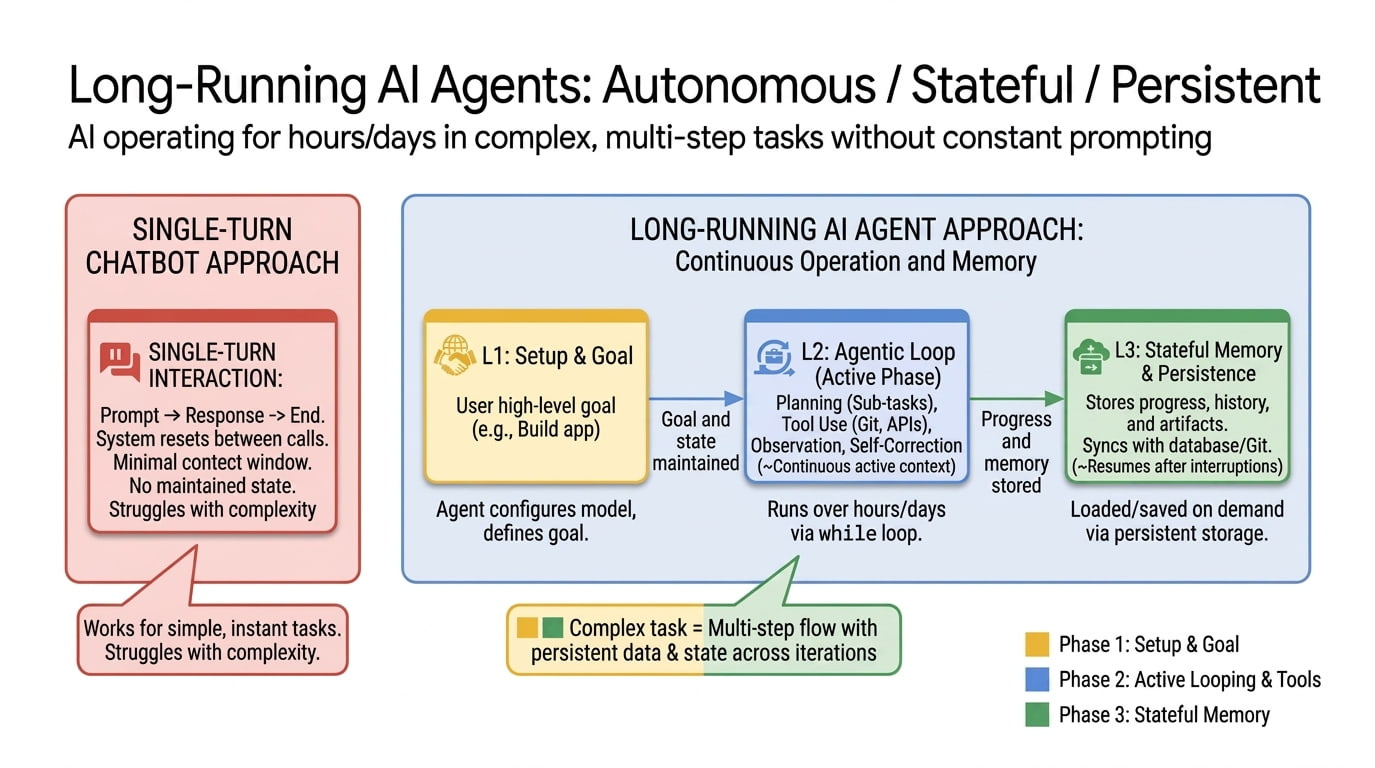

Extending agentic engineering past a single session, Addy Osmani maps how AI agents evolve from chat loops into systems working across days. He names the three walls every long-running agent hits: finite context, no persistent state, no self-verification. Anthropic, Cursor, and Google converge on similar answers.

What this enables: If you want agents that survive past a single session, push state out of the context window and into durable artifacts the next session can read.
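
To make "durable artifacts" concrete, here is a minimal sketch, assuming a JSON progress file that each session reads on start and rewrites on exit. The file name `progress.json` and the `done`/`todo`/`notes` layout are illustrative choices, not a format taken from Osmani's piece or any vendor's tooling.

```python
import json
import tempfile
from pathlib import Path


def load_state(path: Path) -> dict:
    """Return the saved state, or a fresh one if no earlier session wrote the file."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"done": [], "todo": [], "notes": []}


def save_state(path: Path, state: dict) -> None:
    """Write to a temp file, then rename, so a crash cannot leave a half-written file."""
    tmp = path.with_name(path.name + ".tmp")
    tmp.write_text(json.dumps(state, indent=2), encoding="utf-8")
    tmp.replace(path)


def complete_task(state: dict, task: str, note: str) -> dict:
    """Move a task from todo to done and leave a note for the next session."""
    return {
        "done": state["done"] + [task],
        "todo": [t for t in state["todo"] if t != task],
        "notes": state["notes"] + [note],
    }


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        progress = Path(d) / "progress.json"
        state = load_state(progress)
        state["todo"] = ["write parser", "add tests"]
        save_state(progress, complete_task(state, "write parser", "parser handles empty input"))
        print(load_state(progress)["todo"])  # what a fresh session would pick up
```

The point of the design is that nothing the next session needs lives only in the model's context: the file is the handoff.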

RAG Isn’t Enough: Building the Missing Context Layer

Estimated read time: 11 min

Building on the context wall: when RAG breaks under multi-turn pressure, the failure isn’t retrieval; it’s what enters the context window. This walkthrough builds a context engineering pipeline in Python, covering hybrid retrieval, re-ranking, memory decay, compression, and token budgeting with measured benchmarks.

Why this matters: For multi-turn LLM systems, controlling what enters the context window matters more than improving retrieval quality. Engineering the context layer is the real leverage point.
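
Two of those stages, memory decay and token budgeting, fit in a short sketch. This is not the walkthrough's code: the `Chunk` fields, the half-life decay rule, and the word-count token estimate are all simplifying assumptions, and a real pipeline would use the model's tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    relevance: float  # retrieval or re-ranker score, higher is better
    age_turns: int    # turns since this chunk last mattered


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def decayed_score(chunk: Chunk, half_life_turns: float = 4.0) -> float:
    """Halve a chunk's score every `half_life_turns` turns (assumed decay rule)."""
    return chunk.relevance * 0.5 ** (chunk.age_turns / half_life_turns)


def pack_context(chunks: list[Chunk], budget_tokens: int) -> list[Chunk]:
    """Greedily keep the highest-scoring chunks that still fit the token budget."""
    kept: list[Chunk] = []
    used = 0
    for chunk in sorted(chunks, key=decayed_score, reverse=True):
        cost = estimate_tokens(chunk.text)
        if used + cost <= budget_tokens:
            kept.append(chunk)
            used += cost
    return kept


if __name__ == "__main__":
    history = [
        Chunk("refunds are accepted within thirty days", 0.9, age_turns=0),
        Chunk("user said hello earlier", 0.8, age_turns=8),
        Chunk("shipping takes five business days in europe", 0.6, age_turns=0),
    ]
    for chunk in pack_context(history, budget_tokens=13):
        print(chunk.text)
```

With these made-up numbers the stale greeting is dropped even though its raw relevance beats the shipping chunk, which is the behavior decay is meant to buy.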

MCP Tool Chains That Actually Finish

Estimated read time: 7 min

Shifting from context to tool design, Rui Carmo distills a year of MCP server work into design patterns for tool chains that don’t misfire. The core insight: models don’t plan, they walk breadcrumbs. Servers must make each next call obvious through consistent prefixes, embedded hints, and anchor-based addressing.

Worth noting: If your MCP tools force the model to guess what comes next, you’ve already lost. Bake the chain into naming, responses, and addressing.
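
Here is what those breadcrumbs might look like, sketched as plain Python functions rather than a real MCP server. It uses no MCP SDK, and the `notes_*` tool names, the `hint` field, and the in-memory `NOTES` store are hypothetical.

```python
NOTES = {"n1": "Draft agenda", "n2": "Ship checklist"}  # hypothetical data store

UNKNOWN_HINT = "Call notes_list to see valid anchors."


def notes_list() -> dict:
    """List note anchors and name the call that should come next."""
    return {
        "anchors": sorted(NOTES),
        "hint": "Call notes_read with one of these anchors.",
    }


def notes_read(anchor: str) -> dict:
    """Read one note by anchor; on a miss, point back to the listing call."""
    if anchor not in NOTES:
        return {"error": f"Unknown anchor {anchor!r}.", "hint": UNKNOWN_HINT}
    return {
        "anchor": anchor,
        "text": NOTES[anchor],
        "hint": "Call notes_update with this anchor to change the text.",
    }


def notes_update(anchor: str, text: str) -> dict:
    """Replace a note's text and suggest a read to confirm the change."""
    if anchor not in NOTES:
        return {"error": f"Unknown anchor {anchor!r}.", "hint": UNKNOWN_HINT}
    NOTES[anchor] = text
    return {"anchor": anchor, "hint": "Call notes_read with this anchor to confirm."}


# One shared prefix keeps the related tools adjacent in any tool listing.
TOOLS = {fn.__name__: fn for fn in (notes_list, notes_read, notes_update)}

if __name__ == "__main__":
    print(TOOLS["notes_list"]())
    print(TOOLS["notes_read"]("missing"))
```

Every response, including the error path, says which tool to call next, so the model never has to infer the chain from the schema alone.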

When Evaluating AI Costs More Than Building It

Estimated read time: 10 min

Following our look at the AI evaluation stack[1] from last week, this Hugging Face analysis turns to the economics of evaluation, showing how it has crossed a cost threshold that locks out independent researchers. HAL spent $40,000 on agent rollouts, PaperBench runs hit $9,500 each, and evaluation compute now exceeds training compute in some domains.

The context: If you’re benchmarking agents, scaffold choice and reliability reruns drive cost more than model selection, so budget for the multiplier before the model.

[1] The AI Evaluation Stack: Beyond Vibe Checks for Production LLMs
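
To see why the multiplier dominates, here is a back-of-the-envelope sketch. Every number is made up for illustration and none comes from the Hugging Face analysis; the model simply assumes cost scales with tasks × scaffolds × reruns × cost per rollout.

```python
def eval_cost(tasks: int, scaffolds: int, reruns: int, usd_per_rollout: float) -> float:
    """Total benchmark cost under a simple multiplicative model (an assumption)."""
    return tasks * scaffolds * reruns * usd_per_rollout


if __name__ == "__main__":
    # Hypothetical figures: 100 tasks at $2.00 per agent rollout.
    single_pass = eval_cost(tasks=100, scaffolds=1, reruns=1, usd_per_rollout=2.0)
    full_study = eval_cost(tasks=100, scaffolds=3, reruns=5, usd_per_rollout=2.0)
    print(f"single pass: ${single_pass:,.0f}")
    print(f"3 scaffolds x 5 reruns: ${full_study:,.0f}")
    print(f"multiplier: {full_study / single_pass:.0f}x")
```

In this toy model, swapping in a model that is twice as expensive per rollout doubles the bill, while three scaffolds and five reruns multiply it by fifteen.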

TOOLS

Warp Goes Open Source with an Agent-First Contribution Model

Estimated read time: 7 min

Warp’s client is now open source under AGPL, with OpenAI as founding sponsor. The notable shift is the contribution model: humans supervise fleets of agents that handle implementation via Warp’s Oz platform. Support for Kimi, MiniMax, and Qwen models also lands.

Why now: The bottleneck in software development is shifting from writing code to specifying intent and verifying agent output, and Warp is restructuring its project around that shift.

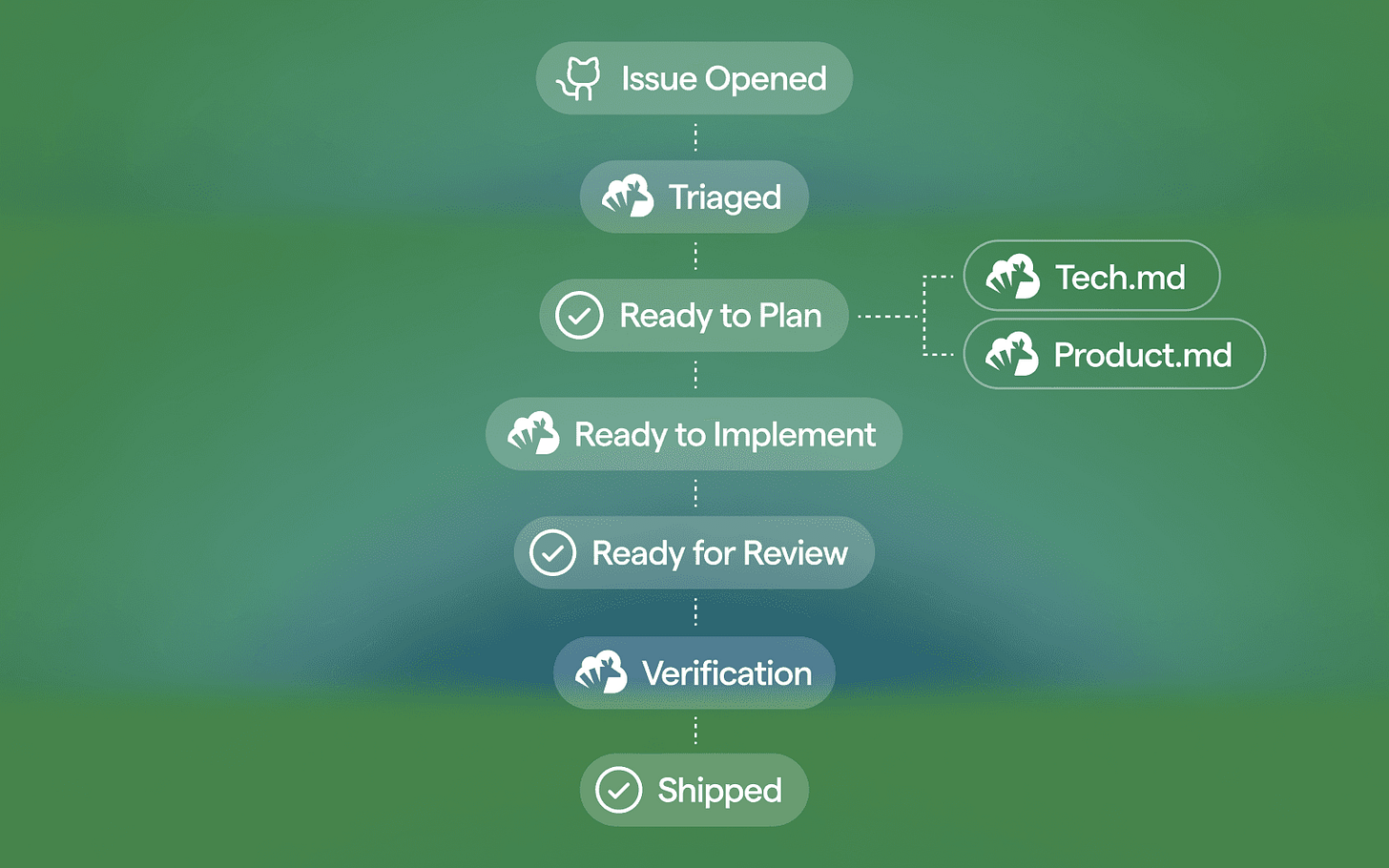

Agent Skills: Production-Grade Workflows for AI Coding Agents

Estimated read time: 8 min

Complementing our coverage of Garry Tan’s skillify approach[1] from last week, Addy Osmani’s open-source pack ships 20 structured skills across the development lifecycle, built around anti-rationalization tables and verification gates. It encodes practices from Software Engineering at Google and works with Claude Code, Cursor, Gemini CLI, and Copilot.

The opportunity: If your AI agent keeps skipping specs, tests, and reviews, drop in opinionated skill files that force it to follow a senior engineer’s workflow.

[1] Skillify: Turning Agent Failures Into Permanent Fixes

NVIDIA’s Nemotron 3 Nano Omni: One Model for Docs, Video, Audio, and GUIs

Estimated read time: 9 min

Shifting from agent harnesses to the models themselves, NVIDIA releases Nemotron 3 Nano Omni, a 30B-A3B model unifying text, images, video, and native audio in one sequence. Its hybrid Mamba-Transformer-MoE backbone handles 100+ page documents, narrated video, and GUI screenshots with up to 9x throughput gains.

What’s interesting: If your workflow mixes documents, screen recordings, and audio, a single open-weights model can now reason across all of them without stitched pipelines.

Eden AI: A European Unified API for AI Models

Estimated read time: 1 min

Also in the model access space, Eden AI provides a single unified API for LLMs and expert AI models like OCR, speech, and vision. The European platform adds smart routing, automatic fallbacks, and region-based model selection, positioning itself as an alternative to OpenRouter.

When this fits: If vendor lock-in or regional compliance is a concern, Eden AI is worth evaluating as a European-based aggregator for AI model access.

NEWS & EDITORIALS

This week’s editorials sharpen the engineer’s role, then close on the geopolitical layer reshaping which tools and talent are reachable.

AI Should Elevate Your Thinking, Not Replace It

Estimated read time: 9 min

Koshy John argues engineers are splitting into two camps: those using AI to sharpen judgment and those using it to simulate competence without building it. Outsourcing reasoning skips the friction that forges instinct and taste, with serious implications for early-career engineers.

The principle: Delegate the mechanical work to AI, but own the reasoning, because judgment is built through friction and cannot be outsourced without atrophying.

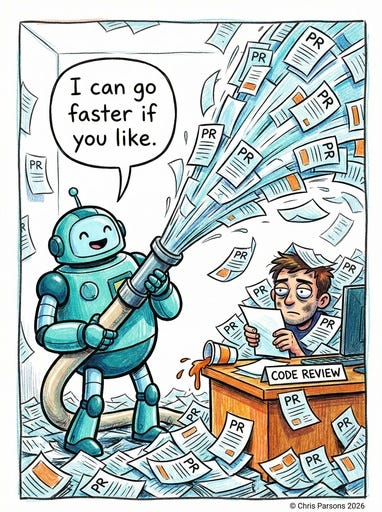

Coding With AI in 2026: From Approver to Trainer

Estimated read time: 12 min

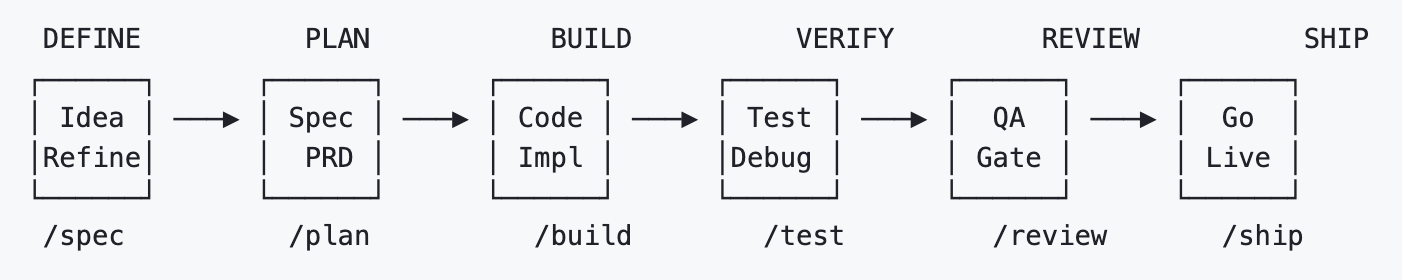

Building on that judgment theme, Chris Parsons argues the serious AI coding work has moved from the IDE to the command line, and that senior engineers should train the agent rather than review every diff. He makes the case for investing in the harness over prompts, and for specifying the problem rather than the solution.

Where to invest: Invest in the harness around your agent, including CLAUDE.md, skill files, and feedback loops. This is what Karpathy meant by agentic engineering as a discipline; the wrapper is the work.

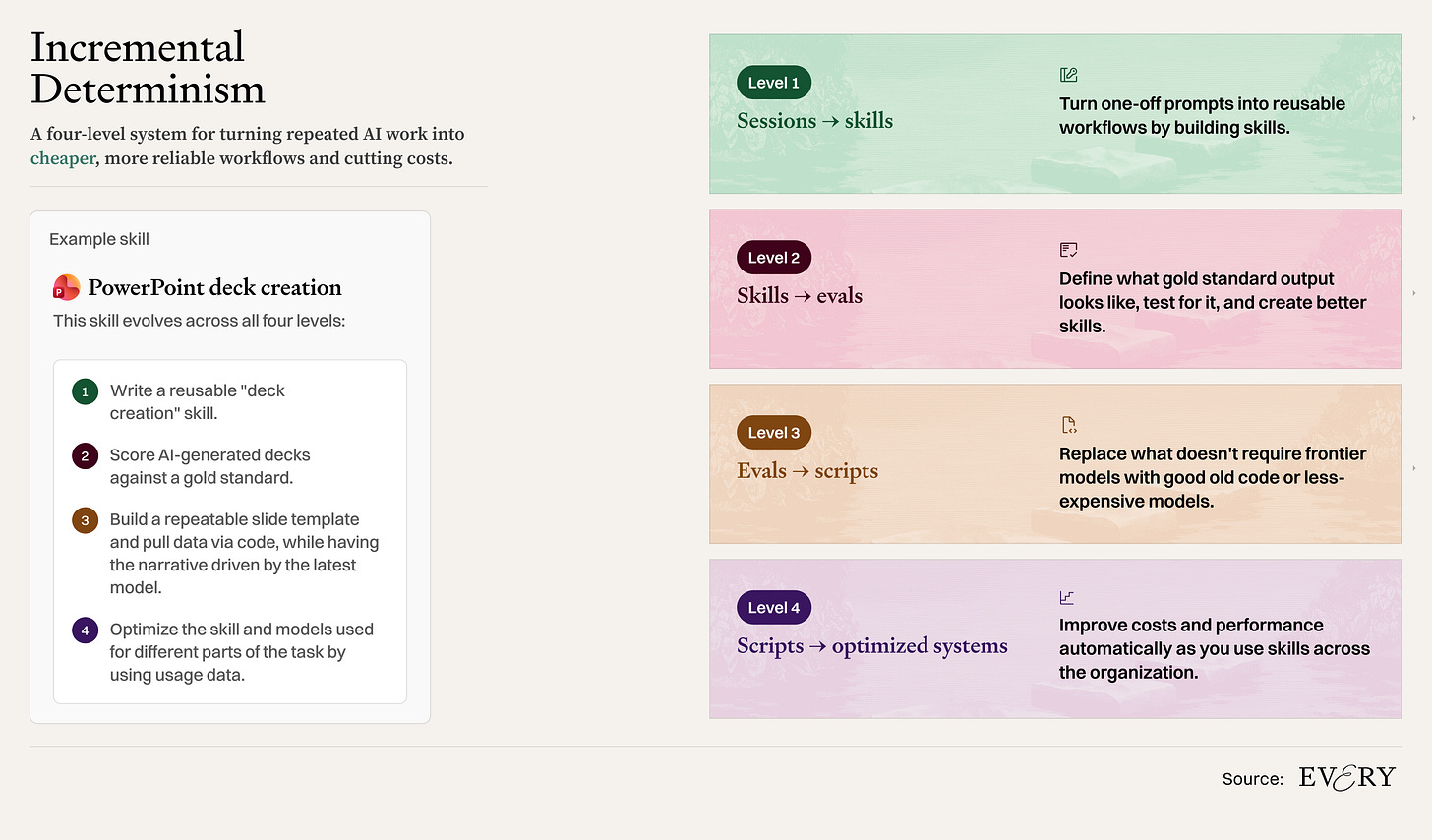

You Are the Most Expensive Model

Estimated read time: 6 min

Taking this further on the practical side, Mike Taylor argues routing every task through frontier models wastes money and human attention. He introduces incremental determinism, a method for turning repeated AI sessions into reusable skill files that offload work to cheaper subagents.

Practical tip: If you’ve done a task with AI three times, turn it into a skill file backed by a cheaper subagent. Osmani’s skill pack above shows what those files look like at scale.

China Blocks Meta’s $2B Manus Deal as AI Decoupling Accelerates

Estimated read time: 6 min

Shifting from individual practice to the geopolitical layer, China has blocked Meta’s reported $2 billion acquisition of AI agent startup Manus, showing how quickly U.S. and Chinese AI ecosystems are decoupling. Chinese founders now face a bind: stay home and lose U.S. capital, or redomicile and invite Beijing’s scrutiny.

Heads up: Factor geopolitical risk into AI vendor decisions, because the tools and talent you rely on are increasingly shaped by export controls and investment bans.

That’s the week. The shared thread across these pieces is small but durable: the engineer’s craft is moving from writing code to training the agent that writes it. May the harness be with you 😉, and see you next Monday.