Weekly Review: Tighten the Loop

This week's curated tutorials, tools, and news on building better feedback loops for AI development

Happy Monday from Altered Craft’s weekly AI review for developers. We appreciate you starting your week here. This edition is about tighter feedback loops: Anthropic publishes a harness that separates code generation from evaluation, Cloudflare and Stanford ship agent sandboxes, and open-weight models quietly close the gap on frontier performance. New research also finds that as agents get faster, we become the bottleneck.

TUTORIALS & CASE STUDIES

Solve by Default: Practical Patterns for Going AI-Native Beyond Just Code

Estimated read time: 8 min

Scott Berkowitz details "solve by default": using genAI to tackle the problems engineers have historically skipped. Patterns include turning meeting notes into PRDs, converting Slack threads into issues, and dispatching background agents for pairing-session micro-annoyances.

The opportunity: Most engineering friction isn’t in the code itself. This reframes AI as a tool for the invisible overhead, the meetings, threads, and handoffs, that quietly drains team momentum between commits.

You’re Already Building DSPy, Just Worse

Estimated read time: 10 min

Taking this AI-native mindset from ad-hoc patterns to systematic architecture, Skylar Payne argues teams inevitably reinvent the patterns DSPy already packages. A side-by-side comparison and practical checklist help you adopt the core benefits without the framework.

Why this matters: Whether you adopt DSPy or not, its core patterns (typed I/O, composable modules, eval infrastructure) represent where production AI systems converge. Learning them now saves painful refactors later.
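
To make those three patterns concrete, here is a minimal sketch in plain Python with no DSPy dependency. Everything in it is hypothetical and chosen for illustration: the `Ticket` and `Triage` types, the `keyword_triage` stand-in for an LLM call, and the four labeled examples. It is not DSPy's API, only the shape of the idea.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# Typed I/O: the step's contract is explicit instead of buried in a prompt string.
@dataclass(frozen=True)
class Ticket:
    text: str

@dataclass(frozen=True)
class Triage:
    label: str  # "bug" or "question" in this toy example

# A module is any callable from the input type to the output type,
# so implementations can be swapped without touching callers or evals.
TriageModule = Callable[[Ticket], Triage]

def keyword_triage(ticket: Ticket) -> Triage:
    """Hypothetical stand-in for an LLM call so the sketch runs offline."""
    is_bug = any(w in ticket.text.lower() for w in ("crash", "error", "broken"))
    return Triage(label="bug" if is_bug else "question")

def accuracy(module: TriageModule, examples: Iterable[tuple[Ticket, str]]) -> float:
    """Eval infrastructure in miniature: score any module on labeled examples."""
    pairs = list(examples)
    hits = sum(module(ticket).label == expected for ticket, expected in pairs)
    return hits / len(pairs)

# Made-up labeled examples; the last one is deliberately missed by the keyword rule.
EXAMPLES = [
    (Ticket("App crashes on login"), "bug"),
    (Ticket("How do I export my data?"), "question"),
    (Ticket("Error 500 when saving"), "bug"),
    (Ticket("The button is misaligned"), "bug"),
]

score = accuracy(keyword_triage, EXAMPLES)
print(f"accuracy: {score:.2f}")
```

Because `accuracy` accepts any `TriageModule`, replacing the keyword rule with a real model call leaves the eval untouched, which is the refactor-saving property the article is pointing at.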

Anthropic’s GAN-Inspired Multi-Agent Harness for Long-Running Autonomous Coding

Estimated read time: 18 min

Moving from framework patterns to agent orchestration, Anthropic details a three-agent architecture for multi-hour autonomous coding. The breakthrough: separating generation from evaluation proves far more tractable than self-critique, with calibrated evaluators turning subjective quality into actionable feedback.

Key point: If your AI coding agent praises its own mediocre work, this GAN-inspired generation/evaluation split gives you a concrete, battle-tested architecture for making quality feedback actionable.
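
The generation/evaluation split can be sketched as a small loop. This is an illustration under stated assumptions, not Anthropic's harness: `run_loop`, `Review`, and both stub agents are hypothetical names, and the stubs replace real model calls so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

@dataclass
class Review:
    passed: bool
    feedback: str  # actionable notes handed back to the generator

# Hypothetical signatures: a generator sees the task plus prior feedback,
# an evaluator sees the task plus the draft and never writes code itself.
Generator = Callable[[str, str], str]
Evaluator = Callable[[str, str], Review]

def run_loop(task: str, generate: Generator, evaluate: Evaluator,
             max_rounds: int = 3) -> Tuple[str, int]:
    """Alternate generation and independent evaluation until the draft passes."""
    feedback = ""
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = generate(task, feedback)
        review = evaluate(task, draft)
        if review.passed:
            return draft, round_no
        feedback = review.feedback
    return draft, max_rounds  # best effort after exhausting the budget

def stub_generate(task: str, feedback: str) -> str:
    """Stand-in generator: only adds tests once the evaluator asks for them."""
    return f"{task} + tests" if "tests" in feedback else task

def stub_evaluate(task: str, draft: str) -> Review:
    """Stand-in evaluator: rejects any draft that ships without tests."""
    if "tests" in draft:
        return Review(passed=True, feedback="")
    return Review(passed=False, feedback="add tests")

result = run_loop("parser", stub_generate, stub_evaluate)
print(result)
```

The design choice worth noticing is that the generator never grades itself: the only path to "done" runs through a separate evaluator with its own criteria.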

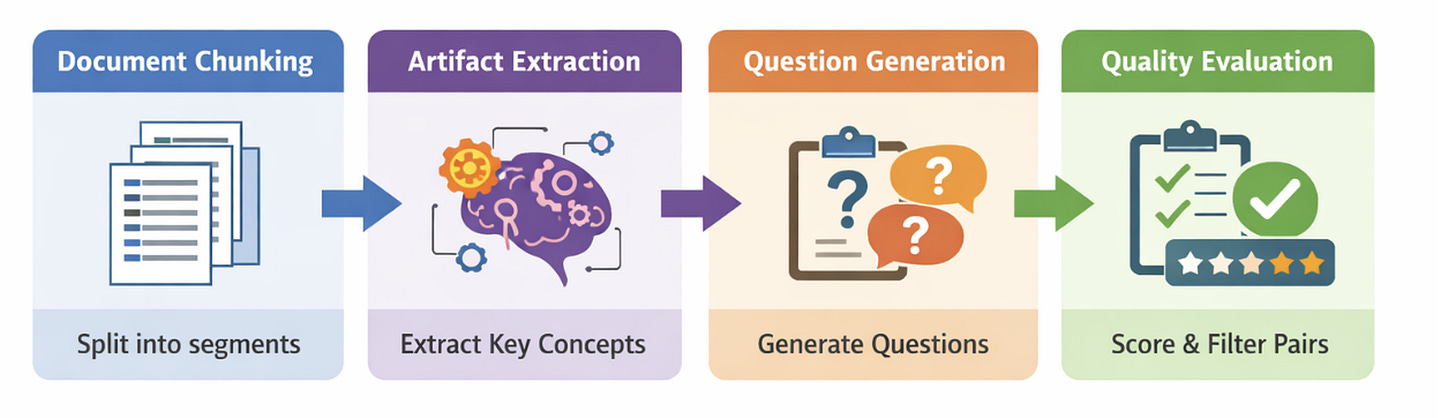

Fine-Tune Domain-Specific Embeddings for RAG on a Single GPU

Estimated read time: 12 min

Shifting from agent architecture to retrieval, NVIDIA releases an end-to-end recipe for fine-tuning embedding models for domain-specific RAG using synthetic data and hard negative mining on a single GPU. No manual labeling needed. Atlassian saw a 26% recall improvement.

What this enables: You can meaningfully improve RAG retrieval using LLM-generated synthetic data from your own docs. No labeled dataset, no GPU cluster, just one card and your domain knowledge.
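
For readers new to hard negative mining, a rough sketch of the idea follows. It is not NVIDIA's recipe: the function names, the two-dimensional toy vectors, and the document IDs are all made up for illustration. The point is only that the most useful negatives are documents that score close to the query yet are not the labeled positive.

```python
import math
from typing import Dict, List, Sequence

def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def mine_hard_negatives(query: Sequence[float], positive_id: str,
                        docs: Dict[str, Sequence[float]], k: int = 2) -> List[str]:
    """Return the k non-positive documents most similar to the query."""
    scored = [
        (cosine(query, vec), doc_id)
        for doc_id, vec in docs.items()
        if doc_id != positive_id  # the labeled positive is never a negative
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]

# Toy embeddings (hypothetical); real ones would come from the base embedding model.
DOCS = {
    "a": [1.0, 0.0],   # the labeled positive for this query
    "b": [0.9, 0.1],   # near miss: a hard negative
    "c": [0.0, 1.0],   # unrelated: an easy negative
    "d": [0.5, 0.5],   # partially related
}

hard = mine_hard_negatives([1.0, 0.0], positive_id="a", docs=DOCS, k=2)
print(hard)
```

Training against near misses like "b" forces the model to learn finer distinctions than it would from unrelated documents like "c".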

TOOLS

Ossature: An Open-Source Harness for Spec-Driven Code Generation

Estimated read time: 11 min

Ossature tackles the gap between LLMs generating functions well and producing coherent projects. Its spec-driven harness validates, audits, and builds code one task at a time, giving each task narrow context with built-in verification loops.

The takeaway: If AI-generated code works in isolation but falls apart at module boundaries, spec-driven generation with narrow context and verification loops is a practical pattern worth exploring.

Awesome CursorRules: A Curated Collection of .cursorrules for Every Stack

Estimated read time: 4 min

Also in the AI code generation space, this community repository collects hundreds of .cursorrules configuration files spanning frontend, backend, mobile, and testing stacks. Each provides project-specific instructions that tailor Cursor AI’s output to particular frameworks.

Worth noting: Instead of writing .cursorrules from scratch, grab a template and customize it. The patterns here apply to any AI coding tool that accepts project-level instructions.

Cloudflare’s Dynamic Worker Loader: Isolate-Based Sandboxes That Are 100x Faster Than Containers

Estimated read time: 11 min

Once AI generates the code, you need somewhere safe to run it. Following our look at NVIDIA’s kernel-level OpenShell runtime[1] last week, Cloudflare takes a lighter approach. Dynamic Worker Loader moves into open beta with V8 isolate sandboxes that boot in milliseconds, roughly 100x faster than containers, with no concurrency limits and a decade of security hardening behind them.

Why now: As agents increasingly generate and execute their own code, the demand for lightweight, production-grade sandboxes is accelerating. Cloudflare’s isolate approach fills a real gap in the agent infrastructure stack.

[1] NVIDIA OpenShell: A Sandboxed Runtime for AI Agents That Actually Need Access

jai: A Lightweight Sandbox to Stop AI Agents From Wrecking Your Home Directory

Estimated read time: 3 min

For local development, Stanford releases jai, a free tool that sandboxes AI coding agents with a single command. Copy-on-write overlays protect your home directory while keeping the working directory writable. Three isolation modes fill the gap between raw shell access and containerization.

Practical tip: If you’re running agents like Codex or Claude Code against your local filesystem, prefix the command with jai to contain the blast radius without Docker or VM overhead.

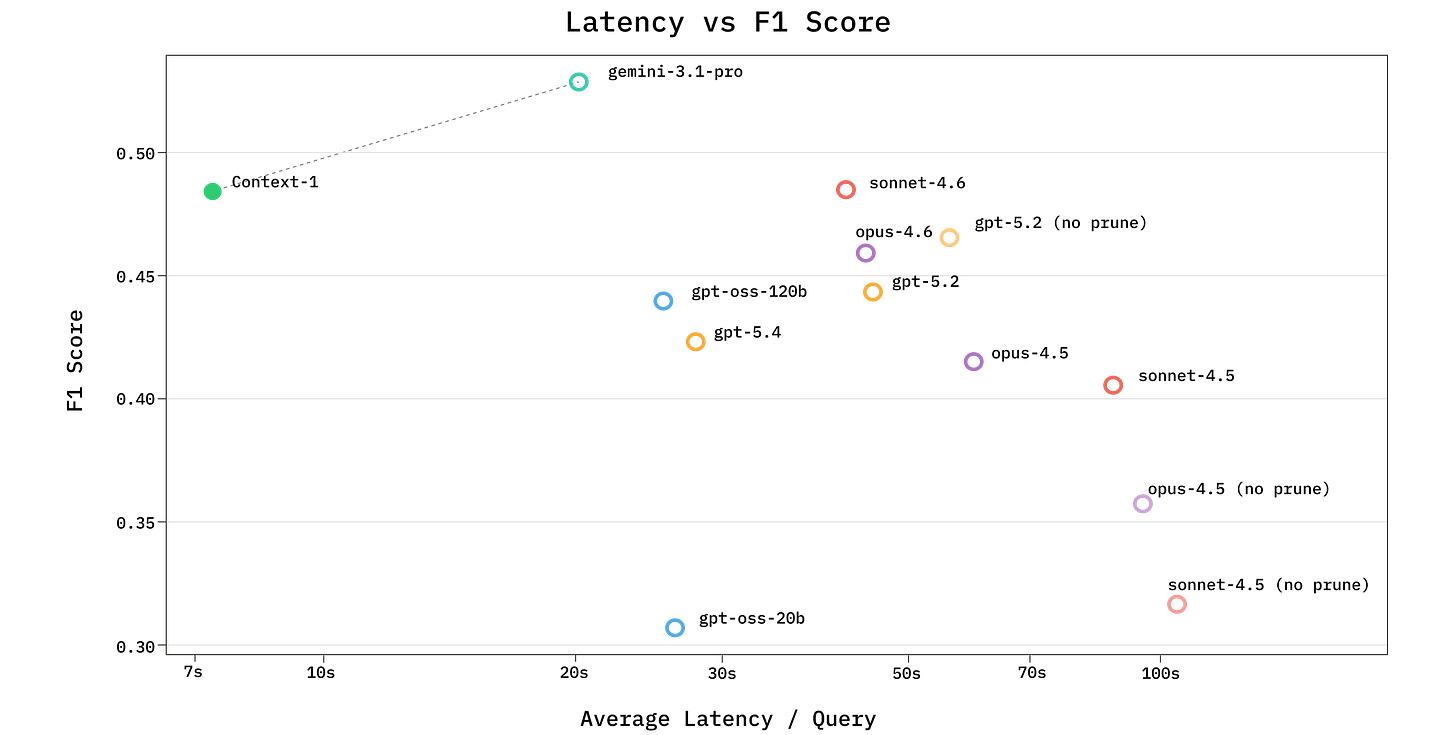

Chroma’s Context-1: A 20B Model That Matches Frontier LLMs at Agentic Search

Estimated read time: 14 min

Chroma releases Context-1, a 20B-parameter model purpose-trained for multi-hop retrieval. Its core innovation is self-editing context management, where the agent selectively discards irrelevant documents during search to prevent context rot, matching frontier LLMs at up to 10x the speed.

What’s interesting: A purpose-trained 20B model matching frontier search agents at dramatically lower cost and latency opens multi-hop RAG to teams that previously couldn’t justify frontier API budgets.
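
To picture what self-editing context means in practice, here is a loose sketch. Context-1 learns what to discard; this example substitutes a simple word-overlap score and a word budget, both of which are assumptions made for illustration, as are the function names and sample documents.

```python
from typing import List

def overlap(query: str, doc: str) -> float:
    """Fraction of query words that appear in the document (a crude relevance proxy)."""
    q = set(query.lower().split())
    d = set(doc.lower().split())
    return len(q & d) / len(q) if q else 0.0

def prune_context(query: str, docs: List[str],
                  min_score: float = 0.3, budget_words: int = 12) -> List[str]:
    """Drop low-relevance documents, then keep the best ones that fit the budget."""
    relevant = [d for d in docs if overlap(query, d) >= min_score]
    relevant.sort(key=lambda d: overlap(query, d), reverse=True)
    kept, used = [], 0
    for doc in relevant:
        cost = len(doc.split())
        if used + cost > budget_words:
            continue  # skip anything that would overflow the context budget
        kept.append(doc)
        used += cost
    return kept

# Hypothetical retrieved documents accumulated over several search hops.
RETRIEVED = [
    "redis eviction policy defaults to noeviction",
    "our office coffee machine is broken",
    "cache sizing guide for redis clusters",
    "policy on vacation days",
]

context = prune_context("redis cache eviction policy", RETRIEVED)
print(context)
```

Running a pruning step like this between hops keeps the working context small, which is the mechanism the article credits for avoiding context rot.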

Mistral Releases Voxtral TTS: A 4B Parameter Multilingual Text-to-Speech Model

Estimated read time: 5 min

Mistral launches Voxtral TTS, a 4B parameter model with emotionally expressive speech across 9 languages. It achieves 70ms latency, adapts to custom voices from 3 seconds of audio, and supports zero-shot cross-lingual generation.

The highlight: Available via API or as open weights, this gives voice agent builders a production-ready option with custom voice cloning from just 3 seconds of reference audio.

NEWS & EDITORIALS

State of the Tech Job Market: PM Roles Hit 3-Year High While Design Stalls

Estimated read time: 7 min

Lenny Rachitsky’s biannual analysis finds PM openings at a three-year high, 67,000+ engineering roles globally, and AI positions hockey-sticking. Yet design roles have plateaued since early 2023, suggesting AI-accelerated engineering is compressing traditional design workflows.

The context: AI-specific roles are accelerating fastest, with a third of openings in the Bay Area, and NYC emerging as the clear second hub. Worth tracking if you’re considering a move.

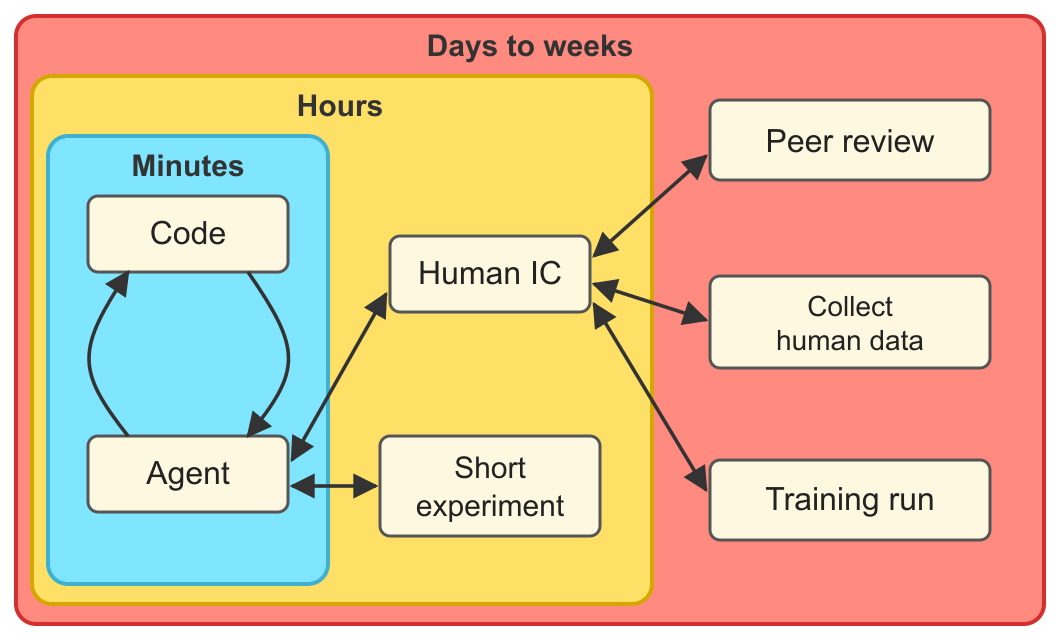

METR’s Tabletop Game Simulates Working with 200-Hour AI Agents, Finds Humans Become the Bottleneck

Estimated read time: 11 min

Adding depth to our coverage of DX’s ~10% productivity finding[1] from last week, METR researchers simulated work with 200-hour AI agents. They found a 3-5x uplift, but human review, feedback, and prioritization become the dominant serial bottlenecks once execution is near-instant.

The signal: As agents handle more execution, the bottleneck shifts to human judgment. Designing workflows around faster review and prioritization will matter more than speeding up implementation.

[1] AI Productivity Gains Land at ~10%, Not the 2-3x Vendors Promised
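
One way to reason about a serial human bottleneck is Amdahl's law. The sketch below is our own back-of-the-envelope model, not METR's methodology, and the 20% human share is an illustrative assumption rather than a figure from the study.

```python
def overall_speedup(human_fraction: float, agent_speedup: float) -> float:
    """Amdahl's law: end-to-end speedup when only the non-human share accelerates.

    human_fraction: share of total time spent on serial human work
                    (review, feedback, prioritization), between 0 and 1.
    agent_speedup:  how many times faster the remaining work becomes.
    """
    if not 0.0 <= human_fraction <= 1.0:
        raise ValueError("human_fraction must be between 0 and 1")
    if agent_speedup <= 0:
        raise ValueError("agent_speedup must be positive")
    return 1.0 / (human_fraction + (1.0 - human_fraction) / agent_speedup)

# Assumed for illustration: humans account for 20% of the original timeline.
for speedup in (10, 100, 1_000_000):
    print(speedup, round(overall_speedup(0.2, speedup), 2))
```

Under that assumption the end-to-end gain can never exceed 5x, however fast execution gets, because the cap is `1 / human_fraction`. Shrinking the human share moves the ceiling; making agents faster does not.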

MiniMax M2.7 vs. Claude Opus 4.6: 90% of the Quality at 7% of the Cost

Estimated read time: 7 min

Shifting from organizational dynamics to model economics, and following our discussion of MiniMax M2.7’s self-evolution capabilities[1] last week, Kilo Code independently tested the model against Claude Opus 4.6 on three TypeScript tasks. Both models found every bug, but MiniMax delivered comparable detection at roughly 7% of the cost, $0.27 versus $3.67. Claude’s fixes remain more thorough.

Worth watching: Open-weight models are closing the gap on detection tasks. Reserve frontier models for fixes that need depth, and you can cut AI coding costs dramatically on the rest.

[1] MiniMax M2.7: The Model That Helps Build Its Own Next Version
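
The arithmetic behind that routing advice can be sketched in a few lines. The two dollar figures are the ones reported above; treating them as per-run costs and the 25% escalation rate are our assumptions for illustration, not numbers from the Kilo Code test.

```python
MINIMAX_COST = 0.27  # reported cost for MiniMax M2.7 in the comparison (USD)
OPUS_COST = 3.67     # reported cost for Claude Opus 4.6 in the comparison (USD)

def cost_ratio(cheap: float, frontier: float) -> float:
    """Cheaper model's cost as a fraction of the frontier model's cost."""
    return cheap / frontier

def blended_cost(cheap: float, frontier: float, escalation_rate: float) -> float:
    """Expected cost when every run uses the cheap model for detection
    and a fraction of runs escalate to the frontier model for the fix."""
    return cheap + escalation_rate * frontier

ratio = cost_ratio(MINIMAX_COST, OPUS_COST)
blended = blended_cost(MINIMAX_COST, OPUS_COST, escalation_rate=0.25)  # assumed rate
savings = 1.0 - blended / OPUS_COST

print(f"ratio: {ratio:.1%}, blended: ${blended:.2f}, savings: {savings:.0%}")
```

Even with a quarter of runs escalated, the blended cost in this toy model stays well below running everything on the frontier model; the break-even point depends entirely on how often escalation is really needed.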

Inside Claude’s Mind: Anthropic’s Interpretability Research Reveals How LLMs Actually Think

Estimated read time: 11 min

Diving deeper into how these models actually work, Anthropic traced Claude’s internal computations and found surprising gaps between what the model reports and what it does. Claude invents its own algorithms, plans poetry ahead, and by default declines to answer when it lacks the knowledge. Chain-of-thought traces can be post-hoc fabrications.

Learning opportunity: Understanding how models actually reason versus what they report in chain-of-thought helps you build more robust evaluation pipelines and set realistic expectations for AI-assisted workflows.