Weekly Review: Inside the Agent

The week's curated deep dives into agent architecture, agentic tooling, and the real engineering challenges underneath

Welcome back to Altered Craft’s weekly AI review for developers. We’re glad you’re here, and we don’t take your attention for granted. This week, the conversation shifts from whether to adopt agents to what’s actually inside them. You’ll find practitioners dissecting harness architecture, rebuilding tool interfaces around the Unix CLI, and building isolation infrastructure for production agent teams. On the editorial side, Ethan Mollick maps AI’s rolling disruption while two authors independently conclude that faster code generation is creating new forms of engineering complexity.

TUTORIALS & CASE STUDIES

What Makes AI Agents Actually Work

Estimated read time: 12 min

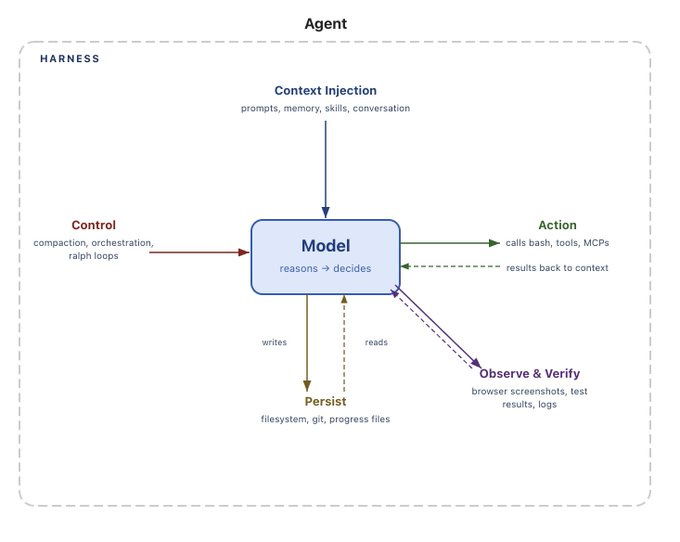

Building on our coverage of Morris’ “harness engineer” role concept[1] from last week, LangChain now formally defines Agent = Model + Harness, where the harness encompasses all code, configuration, and execution logic surrounding the model. This deep dive covers context compaction strategies, sandboxed execution, memory systems, and long-horizon patterns. Its argument: harness engineering remains essential even as models improve.

[1] Positioning Humans in AI Development Loops

Why this matters: Understanding the harness concept helps you architect agent systems that stay robust as you swap underlying models, a critical skill as the landscape shifts rapidly.
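
To make the Agent = Model + Harness split concrete, here is a minimal sketch of our own, not LangChain's code. The `Harness` class, the message-count budget, the `kind` field, and the `scripted_model` stand-in are all hypothetical names chosen for illustration. The point is only that the loop, the tool dispatch, and the context compaction live outside the model, so the model can be swapped for any callable with the same shape.

```python
from dataclasses import dataclass, field
from typing import Callable

Message = dict[str, str]


@dataclass
class Harness:
    """Everything around the model: loop, tool dispatch, context compaction."""
    model: Callable[[list[Message]], Message]  # any callable: history -> next message
    tools: dict[str, Callable[[str], str]]     # tool name -> function of one string
    max_messages: int = 6                      # hypothetical context budget
    history: list[Message] = field(default_factory=list)

    def compact(self) -> None:
        """Collapse the oldest messages into one placeholder once over budget."""
        overflow = len(self.history) - self.max_messages
        if overflow > 0:
            dropped = overflow + 1  # +1 makes room for the placeholder itself
            note = {"role": "system",
                    "content": f"[{dropped} earlier messages compacted]"}
            self.history = [note] + self.history[dropped:]

    def run(self, task: str, max_steps: int = 10) -> str:
        self.history.append({"role": "user", "content": task})
        for _ in range(max_steps):
            self.compact()
            reply = self.model(self.history)
            self.history.append(reply)
            if reply.get("kind") == "final":
                return reply["content"]
            # Otherwise treat the reply as a tool request: "tool_name argument".
            name, _, arg = reply["content"].partition(" ")
            tool = self.tools.get(name)
            result = tool(arg) if tool else f"unknown tool: {name}"
            self.history.append({"role": "tool", "content": result})
        return "step limit reached"


def scripted_model(history: list[Message]) -> Message:
    """Stand-in for a real model: requests one tool call, then answers."""
    last = history[-1]
    if last["role"] == "tool":
        return {"role": "assistant", "kind": "final",
                "content": f"result: {last['content']}"}
    return {"role": "assistant", "kind": "tool", "content": "upper hello"}


harness = Harness(model=scripted_model, tools={"upper": str.upper})
answer = harness.run("shout hello")
```

A real harness would summarize rather than drop old messages and would sandbox tool execution; the sketch only shows where those responsibilities sit.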

Manus Lead Ditches Function Calling for Unix CLI

Estimated read time: 18 min

Extending our coverage of Holmes’ CLI-over-MCP argument[1] from last week, a former Manus backend lead takes the position even further: a single run(command="...") tool with Unix CLI commands outperforms function-calling catalogs entirely. CLI is the densest tool-use pattern in LLM training data, and progressive --help discovery guides agents without bloating system prompts.

[1] CLIs Beat MCP for AI Tool Integration

The takeaway: If you’re building agent tool interfaces, this battle-tested approach reduces tool selection complexity while leveraging what LLMs already know from training data. The production failure stories alone are worth the read.
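
For a feel of what a single `run(command="...")` tool might look like, here is a small sketch under our own assumptions; it is not the author's implementation. The allowlist, timeout, and output cap are hypothetical safety choices, and this version deliberately avoids a shell, so pipes and redirects are not supported.

```python
import shlex
import subprocess

# Hypothetical allowlist; a production harness would choose its own policy.
ALLOWED = {"echo", "ls", "cat", "grep", "wc", "head"}


def run(command: str, timeout: float = 5.0, max_chars: int = 2000) -> str:
    """Execute one CLI command and return text the model can read."""
    try:
        argv = shlex.split(command)
    except ValueError as exc:
        return f"error: could not parse command ({exc})"
    if not argv:
        return "error: empty command"
    if argv[0] not in ALLOWED:
        return f"error: '{argv[0]}' is not allowed; try one of {sorted(ALLOWED)}"
    try:
        # No shell=True: arguments are passed as a list, never interpolated.
        proc = subprocess.run(argv, capture_output=True, text=True,
                              timeout=timeout)
    except FileNotFoundError:
        return f"error: '{argv[0]}' not found on this system"
    except subprocess.TimeoutExpired:
        return f"error: timed out after {timeout}s"
    output = proc.stdout if proc.returncode == 0 else proc.stderr
    # Truncate so one noisy command cannot flood the context window.
    return f"[exit {proc.returncode}]\n{output[:max_chars]}"
```

Errors come back as readable strings rather than exceptions, so the agent can correct itself on the next turn, for example by running `grep --help` to discover flags instead of carrying every option in the system prompt.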

How Claude’s Generative UI Actually Works Inside

Estimated read time: 10 min

Shifting from the tool layer to the presentation layer, Michael Livshits reverse-engineered Anthropic’s generative UI, revealing a show_widget tool call with progressive DOM rendering. He replicated the pattern for a CLI agent using DOM diffing with morphdom to achieve smooth streaming HTML updates.

What’s interesting: The architecture reveals that generative UI requires no specialized rendering engine, just smart HTML injection and DOM diffing. The extracted design system guidelines are a bonus for anyone building similar features.

OpenAI’s Social Engineering Approach to Agent Security

Estimated read time: 7 min

With agents gaining richer capabilities, security becomes critical. OpenAI reframes prompt injection as a social engineering problem. Their Safe Url detection constrains manipulation impact through source-sink analysis, flagging when conversation data would be transmitted to third parties, mirroring how organizations manage human agent risk.

Key point: Source-sink analysis is a practical framework you can apply today when designing agent permissions and data flow boundaries. As agents handle more sensitive tasks, this mental model becomes essential.
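
As a rough illustration of the source-sink idea, and explicitly not OpenAI's implementation, the sketch below treats sensitive strings from the conversation as sources and an outbound URL as the sink. The function name, the trusted-host set, and the example hosts are all hypothetical, and plain substring matching would miss re-encoded data such as base64.

```python
from urllib.parse import unquote_plus, urlparse


def flag_outbound_url(url: str, sources: list[str],
                      trusted_hosts: set[str]) -> list[str]:
    """Return the source strings this URL would carry to an untrusted host."""
    parsed = urlparse(url)
    if parsed.hostname in trusted_hosts:
        return []  # sink is trusted, nothing to flag
    # Decode percent-escapes and '+' so encoded values still match.
    carried = unquote_plus(parsed.path + "?" + parsed.query).lower()
    return [s for s in sources if s and s.lower() in carried]


sources = ["alice@example.com", "order 4471"]
trusted = {"docs.example.com"}

leak = flag_outbound_url(
    "https://tracker.example.net/pixel?e=alice%40example.com&n=order+4471",
    sources, trusted)
safe = flag_outbound_url(
    "https://docs.example.com/help?q=alice%40example.com", sources, trusted)
```

Here `leak` lists both source strings, while `safe` is empty because the destination is on the trusted list. A harness could block the request or ask the user to confirm whenever the returned list is non-empty.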

From Individual AI to Institutional Intelligence

Estimated read time: 9 min

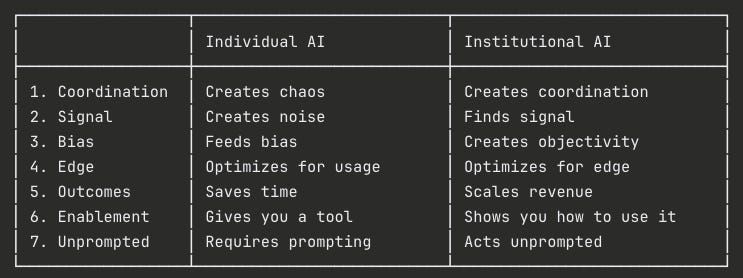

Zooming out from individual agent design, George Sivulka argues that organizations fail to capture AI’s 10x individual productivity gains without redesigning operations. His seven pillars of institutional intelligence cover coordination, signal detection, bias resistance, and unprompted AI action.

The opportunity: If you’re consulting or leading AI adoption, this framework gives you structured vocabulary for conversations about why individual productivity tools aren’t translating into organizational value.

TOOLS

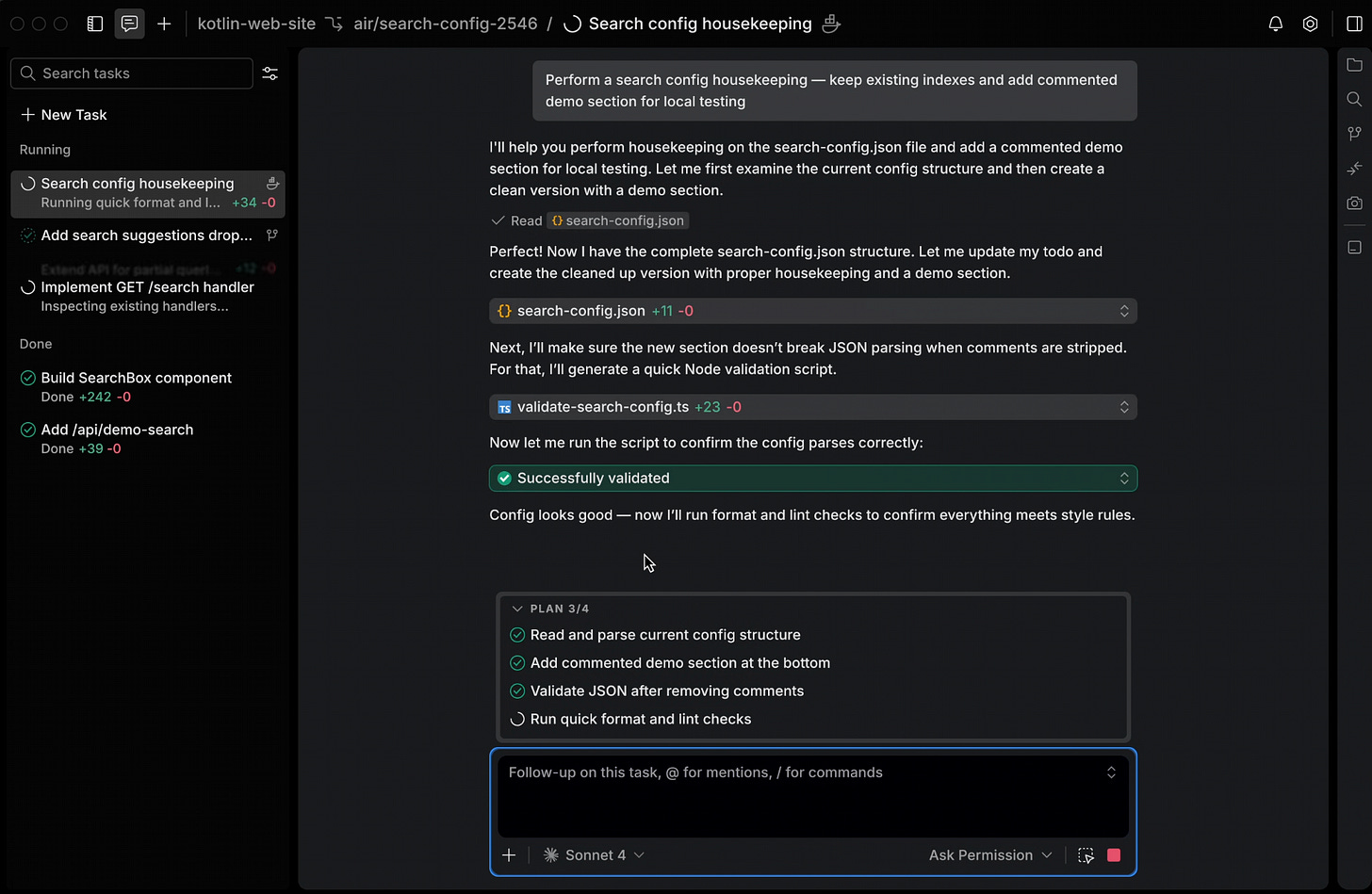

JetBrains Air Runs Multiple Coding Agents Together

Estimated read time: 4 min

JetBrains launches Air, an Agentic Development Environment where Codex, Claude Agent, Gemini CLI, and Junie execute independent task loops in parallel. Each agent runs in its own isolated Docker container or Git worktree. The desktop app provides code-aware task definition, concurrent progress monitoring, and language-aware review. A macOS preview is available now.

Why now: Agent orchestration environments are becoming a new product category. Air’s model-agnostic approach lets you compare agents side by side and match the right tool to each task.

gstack Turns Claude Code Into Expert Teams

Estimated read time: 5 min

Also in the agent orchestration space, YC’s Garry Tan created gstack: eight specialized skills for Claude Code, each enforcing a distinct cognitive mode. They include CEO-level planning, paranoid code review, diff-aware QA, and automated shipping with native browser testing and cookie import. gstack supports 10+ parallel sessions via Conductor.

Worth noting: The philosophy of explicit cognitive mode switching, where planning stays separate from review and shipping, is a workflow pattern worth adopting regardless of which agent tools you use.

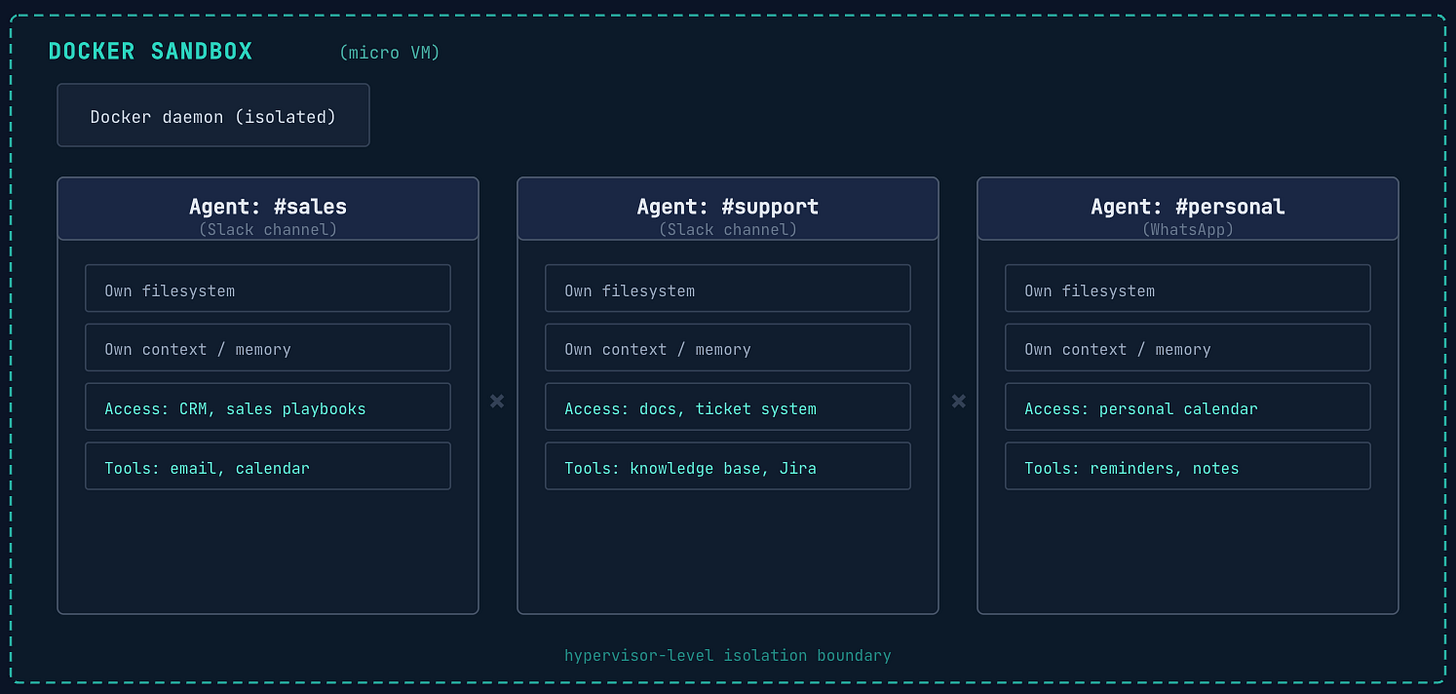

NanoClaw Partners with Docker for Agent Isolation

Estimated read time: 5 min

NanoClaw partners with Docker to run AI agents in isolated micro VMs with one command. Each agent gets its own kernel, filesystem, and daemon with hypervisor-level isolation and millisecond startup. The security model treats agents as potentially malicious actors, enforcing hard boundaries against cross-agent leakage.

The context: As agents gain access to credentials, filesystems, and APIs, the “design for distrust” principle becomes table stakes. This is the infrastructure layer that enterprise agent deployments will require.

GLM-5-Turbo Optimized for Agentic Task Execution

Estimated read time: 3 min

Shifting from agent infrastructure to new models, Z.AI releases GLM-5-Turbo with a 200K context window and 128K max output tokens. Features include enhanced function calling stability for multi-step tasks, context caching, structured output, and MCP integration, all optimized for agentic workflows.

What this enables: The 128K output token limit is notable. Combined with MCP integration and context caching, this model is designed from the ground up for long-running agent task chains.

NVIDIA’s Hybrid Mamba-Transformer for Agentic Reasoning

Estimated read time: 3 min

Also optimized for agentic workloads, NVIDIA releases Nemotron-3-Super-120B-A12B, an open hybrid Mamba-Transformer MoE model with a 1 million token context window. The 120B parameter model features configurable reasoning effort levels and is available through NVIDIA NIM with an OpenAI-compatible API.

What stands out: The hybrid Mamba-Transformer architecture offers a different efficiency tradeoff than pure transformer models. Configurable reasoning effort lets you dial cost vs. quality per request, a feature more models should adopt.

Penguin-VL Rethinks Vision Language Model Design

Estimated read time: 4 min

Tencent’s Penguin-VL initializes its vision encoder from a text-only LLM instead of CLIP, converting causal attention to bidirectional with 2D rotary positional embeddings. Available in 2B and 8B parameter sizes, it excels at OCR, document parsing, and complex visual reasoning with strong efficiency.

The approach: Starting a vision encoder from language model weights rather than image-pretrained ones is a counterintuitive design choice that pays off in document-heavy workflows where text understanding matters most.

Google’s First Natively Multimodal Embedding Model

Estimated read time: 5 min

Also advancing multimodal AI, Google releases Gemini Embedding 2, the first natively multimodal embedding model mapping text, images, video, audio, and documents into a single vector space. It supports 100+ languages and uses Matryoshka Representation Learning to offer flexible output dimensions from 768 to 3072. It is available through the Gemini API and LangChain.

What this unlocks: A single embedding space for text, images, video, and audio simplifies RAG pipelines dramatically. No more separate encoders or complex fusion layers for multimodal retrieval.
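
The flexible dimensions come from how Matryoshka-trained embeddings are laid out: a prefix of the vector is itself a usable embedding. A minimal sketch of the general technique follows, using a toy 8-dimensional vector rather than real model output; the helper name `truncate_embedding` is ours, and nothing here is specific to Google's API.

```python
import math


def truncate_embedding(vec: list[float], dim: int) -> list[float]:
    """Keep the first `dim` values and rescale the result to unit length."""
    if not 0 < dim <= len(vec):
        raise ValueError(f"dim must be between 1 and {len(vec)}")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        return head  # nothing to normalize
    return [x / norm for x in head]


# Toy stand-in for a full-size embedding (a real one would have 3072 values).
full = [3.0, 4.0, 0.0, 0.0, 1.0, 2.0, 2.0, 0.0]
small = truncate_embedding(full, 2)
```

Renormalizing after truncation keeps cosine and dot-product scores comparable across stored vectors. The tradeoff is storage and search speed against some retrieval quality, so it is worth measuring on your own data before picking a size.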

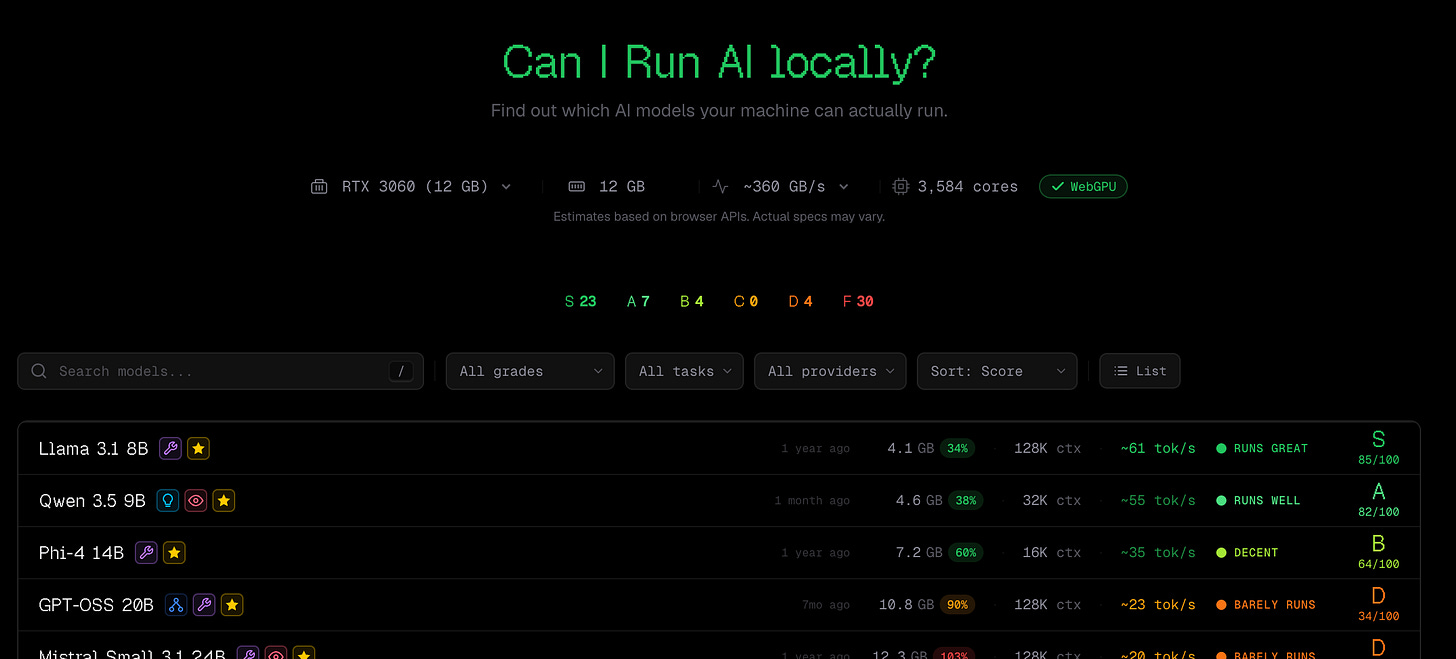

CanIRun.ai Matches Hardware to Local AI Models

Estimated read time: 2 min

For developers exploring which of these models to run locally, CanIRun.ai detects your GPU, CPU, and RAM via browser-based client-side processing and matches against a database of popular open-source models including Llama, Qwen, Mistral, DeepSeek, and Gemma, from 1B to 120B+ parameters.

Bookmark this: It eliminates the guesswork of checking VRAM requirements and quantization options across dozens of models, which makes it handy the next time you’re evaluating a local model setup.

NEWS & EDITORIALS

The Shape of AI’s Rolling Disruption

Estimated read time: 12 min

Ethan Mollick charts AI’s shift from human-AI collaboration to full AI management, where organizations mandate that code must not be written or reviewed by humans. He highlights recursive self-improvement as a key accelerator. Benchmarks show AI now matches top-performing humans 82% of the time.

The big picture: Whether you view these shifts as opportunity or disruption, Mollick’s benchmark data provides the clearest picture of where AI capability stands today and how fast the gap is closing.

Meta Postpones Frontier Model Over Performance Gaps

Estimated read time: 9 min

Illustrating the challenges Mollick describes, Meta postpones its Avocado frontier model after internal testing revealed it failed to match performance benchmarks set by Google Gemini 3.0 and OpenAI’s latest models. The launch is pushed to at least May 2026, highlighting post-training refinement challenges.

Market signal: The rising competitive bar means even massive compute budgets can’t guarantee frontier performance. Post-training refinement and alignment are emerging as the true differentiators, a trend worth watching for anyone selecting models for production.

AI Did Not Simplify Software Engineering

Estimated read time: 6 min

Continuing our coverage of Turkovic’s “easier code, harder engineering” thesis[1] from last week, Rob Englander adds a technical lens, arguing that AI coding tools haven’t simplified the real challenges: architecture, system behavior design, and managing complexity over time. He identifies spec drift as a growing risk where AI generates code faster than teams can maintain alignment between specs, tests, and implementation.

[1] AI Eased Code Writing but Strained Engineering

The insight: “Spec drift” names something many teams are already experiencing but haven’t articulated. Faster code generation without faster specification work creates a new category of technical debt.

Anthropic Launches Institute for AI Societal Impact

Estimated read time: 5 min

Addressing these systemic concerns, Anthropic launches The Anthropic Institute, focused on how powerful AI will reshape jobs, economies, and governance. Led by co-founder Jack Clark, it unifies Frontier Red Team, Societal Impacts, and Economic Research. New hires specialize in AI law and transformative economics.

The signal: Anthropic investing in dedicated societal impact research signals a maturing industry. The focus on AI and rule of law, economic reshaping, and red-teaming could give policymakers more data to work with.

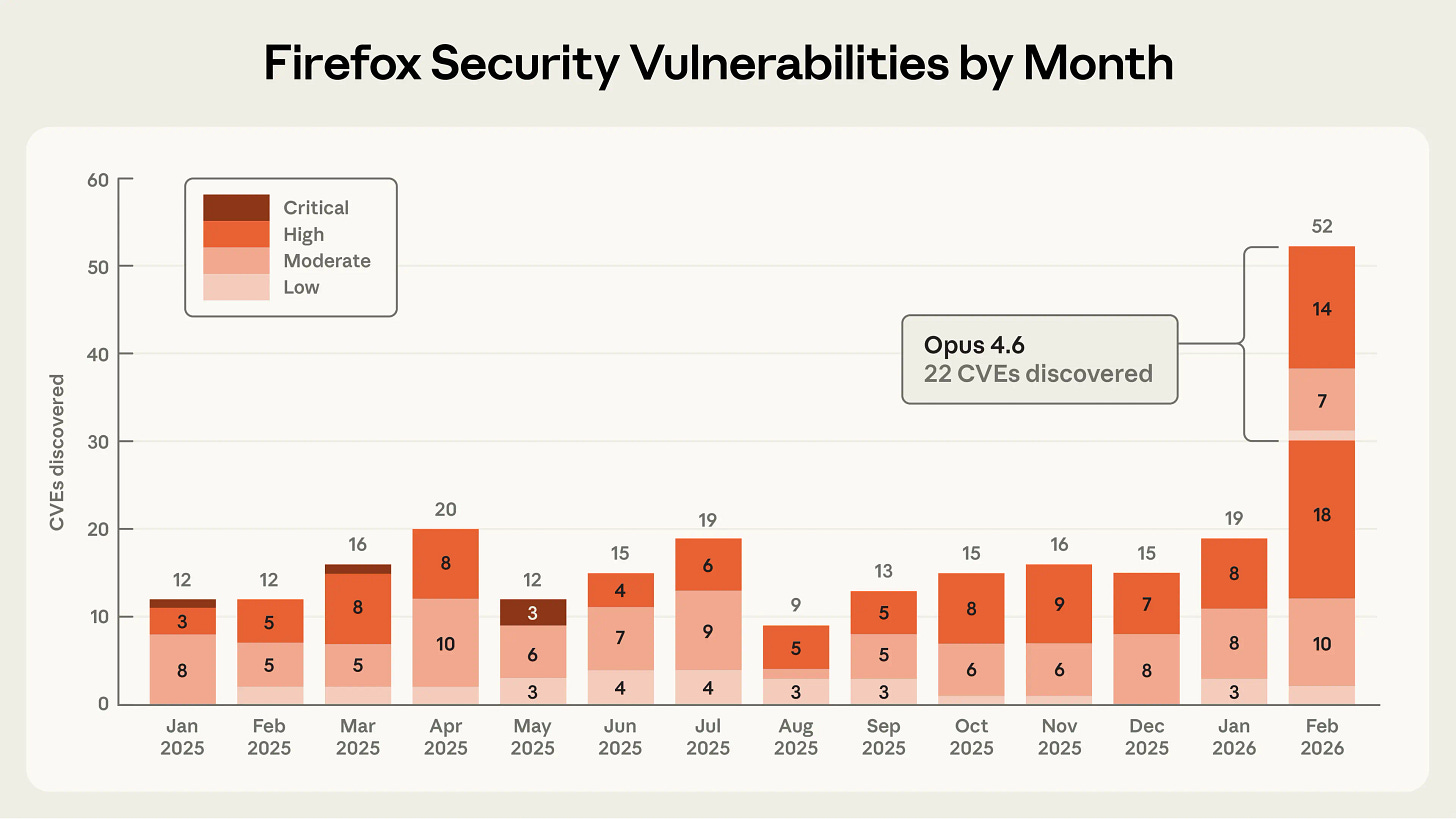

Claude Finds 22 Firefox Vulnerabilities in Two Weeks

Estimated read time: 6 min

Also from Anthropic, Claude Opus 4.6 discovered 22 vulnerabilities in Firefox over two weeks, with Mozilla classifying 14 as high-severity. The model found a use-after-free flaw in twenty minutes. Crucially, discovering a vulnerability currently costs far less than exploiting one, which gives defenders the advantage for now.

For security teams: The asymmetry between discovery cost and exploitation cost currently favors defenders. AI-assisted vulnerability scanning is becoming a practical force multiplier right now.