Weekly Review: Agents Grow Up

The week's curated set of AI tutorials, tools, and perspectives on the maturing agent ecosystem

Welcome to this week’s Altered Craft weekly AI review for developers. We’re grateful you keep showing up every Monday. This edition has a clear throughline: coding agents are leaving the experimental phase and entering the era of engineering discipline. You’ll find new patterns from Simon Willison, security frameworks from Vercel and NanoClaw, and a provocative argument from Amp that the coding agent itself is dead. Karpathy closes us out with a rallying cry that ties it all together: Build. For. Agents.

TUTORIALS & CASE STUDIES

Agentic Engineering Patterns Beyond Vibe Coding

Estimated read time: 5 min

Simon Willison launches an evergreen guide documenting agentic engineering patterns for professionals using coding agents, explicitly distinguishing this discipline from “vibe coding.” The first two chapters cover how cheap code generation reshapes workflows and how Red/Green TDD helps agents produce reliable output.

The context: As coding agents become standard tooling, having a shared vocabulary and proven patterns prevents teams from reinventing workflows. Willison’s guide format means it will evolve alongside the rapidly changing landscape.

OpenAI’s Codex Prompting Guide for Agentic Coding

Estimated read time: 13 min

Putting those patterns into practice, OpenAI’s guide details prompting strategies for GPT-5.3 Codex in agentic workflows. Key techniques include parallelizing tool calls, batching file reads, and treating the model as an autonomous engineer rather than a conversational assistant.

The takeaway: Even if you don’t use Codex, the prompting philosophy here applies broadly. Bias toward action, batch operations, and minimize status updates to get the most from any coding agent.
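To make "batch operations" concrete, here is a minimal sketch of batching file reads so they run concurrently instead of one call per turn. The `read_file` and `read_files_batched` helpers are hypothetical stand-ins for an agent's file tool, not part of Codex or any OpenAI API.

```python
import asyncio
from pathlib import Path


async def read_file(path: Path) -> str:
    """Hypothetical 'read file' tool: runs the blocking read in a worker thread."""
    return await asyncio.to_thread(path.read_text, encoding="utf-8")


async def read_files_batched(paths: list[Path]) -> dict[str, str]:
    """Issue every read at once and wait for all of them together."""
    contents = await asyncio.gather(*(read_file(p) for p in paths))
    # gather() returns results in the same order as its inputs.
    return {str(p): text for p, text in zip(paths, contents)}
```

Calling `asyncio.run(read_files_batched(paths))` returns a mapping from each path to its contents in a single round trip, which is the shape of result a harness could hand back to the model in one tool response.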

Which Web Frameworks Save AI Agents Most Tokens

Estimated read time: 9 min

With agents writing more code than ever, framework choice matters. This benchmark of 19 web frameworks found minimal options like ASP.NET and Express consumed roughly 26k tokens per build, while Phoenix required 74k. Notably, feature additions cost similarly across all frameworks, suggesting overhead primarily impacts initial scaffolding.

Why this matters: If you’re running agents at scale, a nearly 3x token gap between frameworks translates directly to cost. Choosing a minimal framework for agent-generated scaffolding is a simple optimization.
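A rough back-of-the-envelope sketch of what that gap means in dollars. The token counts are the rounded figures quoted above (the unrounded benchmark numbers may differ slightly); the price per million tokens and the number of builds are purely illustrative assumptions, not data from the benchmark.

```python
def scaffold_cost_usd(tokens_per_build: int, builds: int, usd_per_million_tokens: float) -> float:
    """Total token spend for a number of scaffolding builds."""
    return tokens_per_build * builds * usd_per_million_tokens / 1_000_000


MINIMAL_TOKENS = 26_000  # roughly, for the minimal frameworks in the summary above
PHOENIX_TOKENS = 74_000  # Phoenix, per the summary above

ASSUMED_PRICE = 10.0     # assumption: USD per million tokens
ASSUMED_BUILDS = 1_000   # assumption: scaffolds generated per month

ratio = PHOENIX_TOKENS / MINIMAL_TOKENS
minimal_cost = scaffold_cost_usd(MINIMAL_TOKENS, ASSUMED_BUILDS, ASSUMED_PRICE)
phoenix_cost = scaffold_cost_usd(PHOENIX_TOKENS, ASSUMED_BUILDS, ASSUMED_PRICE)

print(f"token ratio: {ratio:.2f}x")
print(f"minimal: ${minimal_cost:,.2f}  phoenix: ${phoenix_cost:,.2f}")
```

Because feature additions reportedly cost about the same everywhere, this difference applies mainly to the initial build, so it matters most when you scaffold many projects rather than iterate on a few.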

Five Security Patterns for AI Agent Architectures

Estimated read time: 9 min

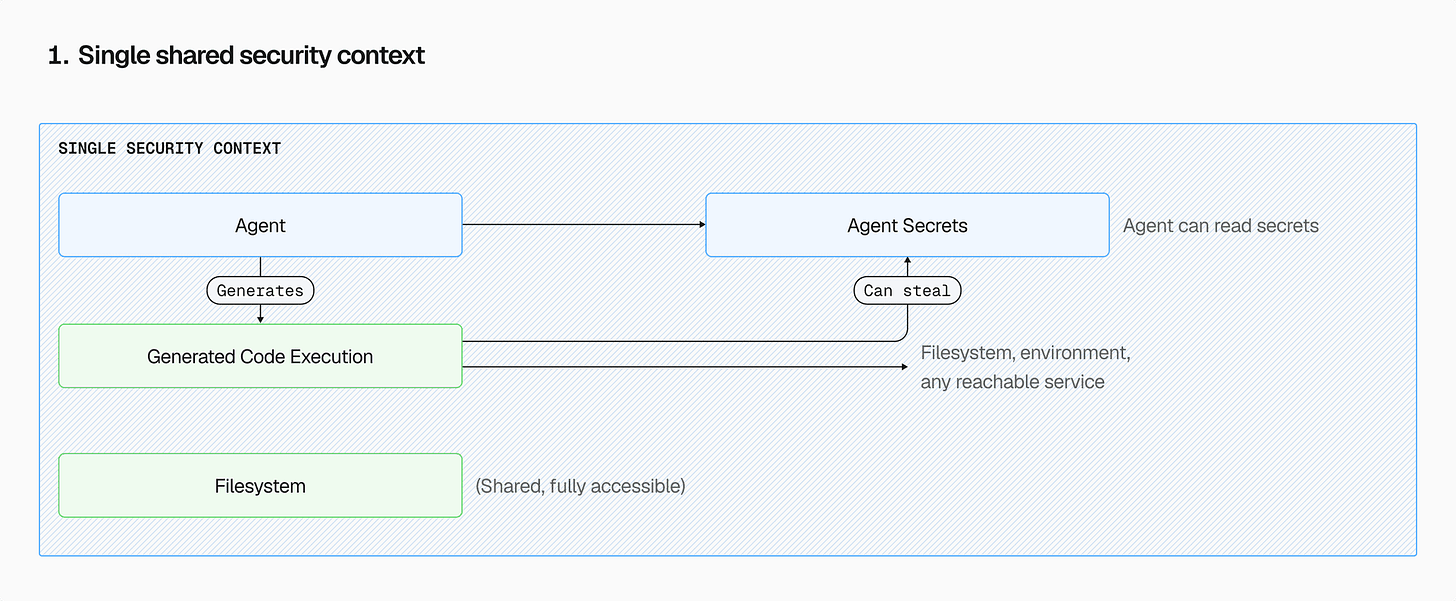

As agents gain deeper access to infrastructure, Vercel maps five progressive security patterns, from zero boundaries to fully separated compute with credential injection. The analysis recommends isolating the agent harness from the execution of generated code as the minimum viable production architecture for teams deploying autonomous coding agents.

Worth noting: Multiple teams are converging on the same conclusion this week: treat agent-generated code as untrusted by default. This piece gives you a concrete maturity model for your own security posture.

NanoClaw’s Distrust-by-Design AI Agent Security Model

Estimated read time: 8 min

Taking that containment philosophy further, NanoClaw treats AI agents as inherently untrusted through three layers: per-agent container isolation, mount allowlists, and fresh containers destroyed after each invocation. At 3,000 lines versus OpenClaw’s 400,000+, the architecture prioritizes simplicity to minimize attack surface.

Key point: The 3,000 vs. 400,000 line comparison is striking. In agent security, a smaller codebase you can actually audit may be more secure than a comprehensive one you can’t.
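For a feel of how two of those layers might look in practice, here is a minimal sketch of a mount allowlist check feeding a throwaway container invocation. This is not NanoClaw's code: the allowlist paths, function names, and the use of Docker as the runtime are all assumptions for illustration.

```python
import posixpath

# Hypothetical allowlist: the only host directories an agent may mount.
MOUNT_ALLOWLIST = ("/srv/agents/workspaces", "/srv/agents/shared-readonly")


def is_mount_allowed(host_path: str, allowlist=MOUNT_ALLOWLIST) -> bool:
    """Allow a mount only if it sits at or under an allowlisted directory."""
    if not posixpath.isabs(host_path):
        return False
    # normpath collapses ".." segments but does NOT resolve symlinks;
    # a real implementation would also need to resolve those on the host.
    normalized = posixpath.normpath(host_path)
    return any(
        normalized == root or normalized.startswith(root + "/")
        for root in allowlist
    )


def build_run_command(agent_id: str, image: str, host_path: str) -> list[str]:
    """Build a `docker run` argv for one per-agent, single-use container."""
    if not is_mount_allowed(host_path):
        raise PermissionError(f"mount not on allowlist: {host_path}")
    return [
        "docker", "run",
        "--rm",                          # remove the container when it exits
        "--name", f"agent-{agent_id}",   # one container per agent
        "-v", f"{posixpath.normpath(host_path)}:/workspace",
        image,
    ]
```

The check is deliberately deny-by-default: anything relative, anything that escapes via `..`, and any sibling directory that merely shares a prefix is rejected before a container is ever started.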

TOOLS

Helm: Two Tools Replace Dozens for AI Agents

Estimated read time: 8 min

Helm is a typed TypeScript framework that collapses agent tool sprawl into two operations: search and execute. Agents discover capabilities on demand, sandboxed via SES with granular permissions. Inspired by Cloudflare’s 99.9% token reduction through search-plus-execute architecture, it ships with filesystem, git, and shell skills.

What this enables: Instead of registering dozens of tools that bloat context windows, you give agents two generic tools and let them discover what they need. A compelling pattern for any agent framework.
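The search-plus-execute idea can be sketched in a few lines. Helm itself is TypeScript; the Python below is only an illustration of the pattern, and every name in it (`Skill`, `SkillRegistry`, the sample skills) is hypothetical rather than Helm's API. Sandboxing and permissions are omitted entirely.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Skill:
    name: str
    description: str
    run: Callable[..., Any]


class SkillRegistry:
    """Exposes exactly two operations to the agent: search and execute."""

    def __init__(self) -> None:
        self._skills: dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def search(self, query: str, limit: int = 5) -> list[dict[str, str]]:
        """Return short descriptions of skills matching any query word."""
        words = query.lower().split()
        scored = []
        for skill in self._skills.values():
            haystack = f"{skill.name} {skill.description}".lower()
            score = sum(word in haystack for word in words)
            if score:
                scored.append((score, skill))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [{"name": s.name, "description": s.description} for _, s in scored[:limit]]

    def execute(self, name: str, **kwargs: Any) -> Any:
        """Run a skill by the name that search() returned."""
        if name not in self._skills:
            raise KeyError(f"unknown skill: {name}")
        return self._skills[name].run(**kwargs)


registry = SkillRegistry()
registry.register(Skill("text.word_count", "Count the words in a string",
                        lambda text: len(text.split())))
registry.register(Skill("text.upper", "Convert a string to upper case",
                        lambda text: text.upper()))

matches = registry.search("count words")
result = registry.execute(matches[0]["name"], text="build for agents")
```

Only the two generic tool schemas ever need to sit in the model's context; individual skill descriptions are pulled in on demand by `search`, which is where the token savings would come from.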

Amp Abandons Editor Agents for CLI-First Approach

Estimated read time: 6 min

Also rethinking how agents interact with codebases, Amp discontinues its VS Code and Cursor extensions to go all-in on the CLI. Its argument: with GPT-5.3-class models, the agent wrapper is no longer the bottleneck. The real constraints are now how organizations structure codebases and workflows for agents.

Why now: This signals a broader industry shift. As model capabilities outpace tooling, the competitive advantage moves from clever agent wrappers to how you organize your codebase for AI consumption.

Garry Tan’s Structured Plan Review Framework

Estimated read time: 5 min

Y Combinator’s Garry Tan shares a plan-exit-review skill that structures code evaluation into four phases: Architecture, Code Quality, Tests, and Performance. It begins with a scope challenge, then walks through opinionated recommendations with concrete tradeoffs rather than neutral option menus.

The opportunity: Drop this directly into your Claude Code or Codex workflow as a reusable skill. The scope challenge step alone can save hours by preventing over-engineering before a single line is written.

Free Claude Max for Open Source Maintainers

Estimated read time: 3 min

On the Anthropic side, Claude for OSS offers six months of free Claude Max to open source maintainers. Eligibility targets repos with 5,000+ stars or 1M+ monthly npm downloads, though maintainers of quietly essential ecosystem dependencies can also apply.

What’s interesting: Anthropic is explicitly welcoming maintainers who don’t hit the star count but keep critical infrastructure running. If that describes your work, the application is worth five minutes.

Continue Local Claude Code Sessions from Any Device

Estimated read time: 9 min

Also from Anthropic, Remote Control lets you continue local Claude Code sessions from phones, tablets, or any browser. Your session stays on your machine with full filesystem and MCP access. Web and mobile interfaces serve as windows into the local session, with automatic reconnection.

What this solves: Start a long-running agent task at your desk, walk away, and check progress from your phone. Eliminates the “is it done yet?” loop that interrupts deep work.

Vercel Chat SDK Unifies Cross-Platform Bot Development

Estimated read time: 4 min

Vercel releases Chat SDK, a TypeScript library for writing bot logic once and deploying across Slack, Teams, Discord, GitHub, and Linear. Features include type-safe event handlers, JSX cards that render natively per platform, and built-in AI SDK streaming for real-time responses.

The practical win: If you’ve built separate Slack and Discord integrations for an AI assistant, you know the pain. Write your bot once with native UI on every platform.

Nano Banana 2 Brings Flash Speed Image Generation

Estimated read time: 4 min

Shifting from code to pixels, Google introduces Nano Banana 2, combining professional-grade image generation with rapid processing. The model features advanced world knowledge, consistent subject representation, and production-ready specifications at flash speed for applications that need quality without sacrificing latency.

Why it’s relevant: Speed is the bottleneck for image generation in production apps. Flash-speed processing with pro quality opens up use cases that were previously too slow for real-time user experiences.

NEWS & EDITORIALS

Hiring Engineers When AI Writes the Code

Estimated read time: 6 min

Tolan shares their redesigned engineering interview where candidates build solutions using AI assistants like Claude and Cursor. The key insight: AI has not changed what distinguishes great engineers. What matters is architectural judgment over implementation speed, recognizing trade-offs, and understanding every line you ship.

The career signal: Whether you’re hiring or interviewing, this reframes the conversation. AI makes judgment and communication more valuable, not less. A reassuring data point for senior engineers navigating the shifting landscape.

OpenClaw Creator: Be More Playful Building AI

Estimated read time: 5 min

On the builder side, Peter Steinberger, creator of the viral AI agent OpenClaw, advocates for a less rigid approach to development. His core advice: be more playful and allow yourself time to improve, letting experimentation and iterative refinement drive better outcomes.

What’s refreshing: In a space dominated by optimization talk, this is a reminder that the best agent builders are treating this moment as a creative frontier, not an engineering sprint.

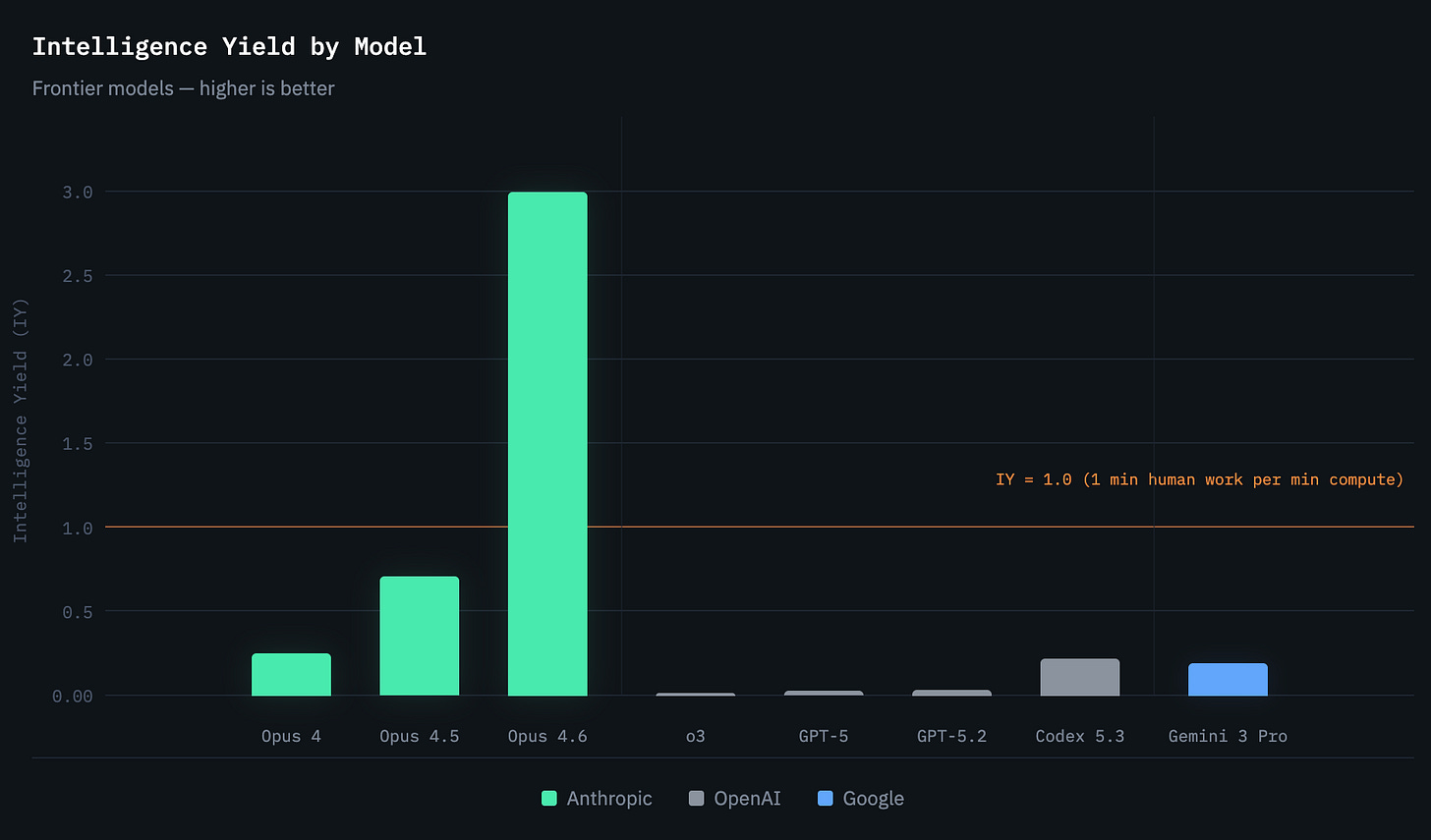

Intelligence Yield Reframes How We Benchmark Models

Estimated read time: 5 min

Shifting from people to models, Intelligence Yield (IY) measures how efficiently AI models convert compute into useful work. Using METR benchmark data, the framework tracks which frontier models solve harder tasks more reliably with less compute, with IY=1.0 marking parity with human work rate.

The shift: As you choose between models for agent workflows, raw capability benchmarks tell only half the story. IY gives you a cost-efficiency lens for comparing what you actually get per dollar of compute.

Build for Agents: Karpathy on CLI-First Products

Estimated read time: 3 min

Andrej Karpathy argues CLIs are ideal for AI agents because they are “legacy” technology that agents can use natively. He demos Claude building a Polymarket dashboard in three minutes, then challenges readers: does your product offer markdown docs, Skills, CLI access, or MCP integration?

The rallying cry: “Build. For. Agents.” Karpathy’s checklist of markdown docs, Skills, CLI, and MCP gives you a concrete framework for making any product or service agent-accessible today.