Token Anxiety

What unused credits are really telling you

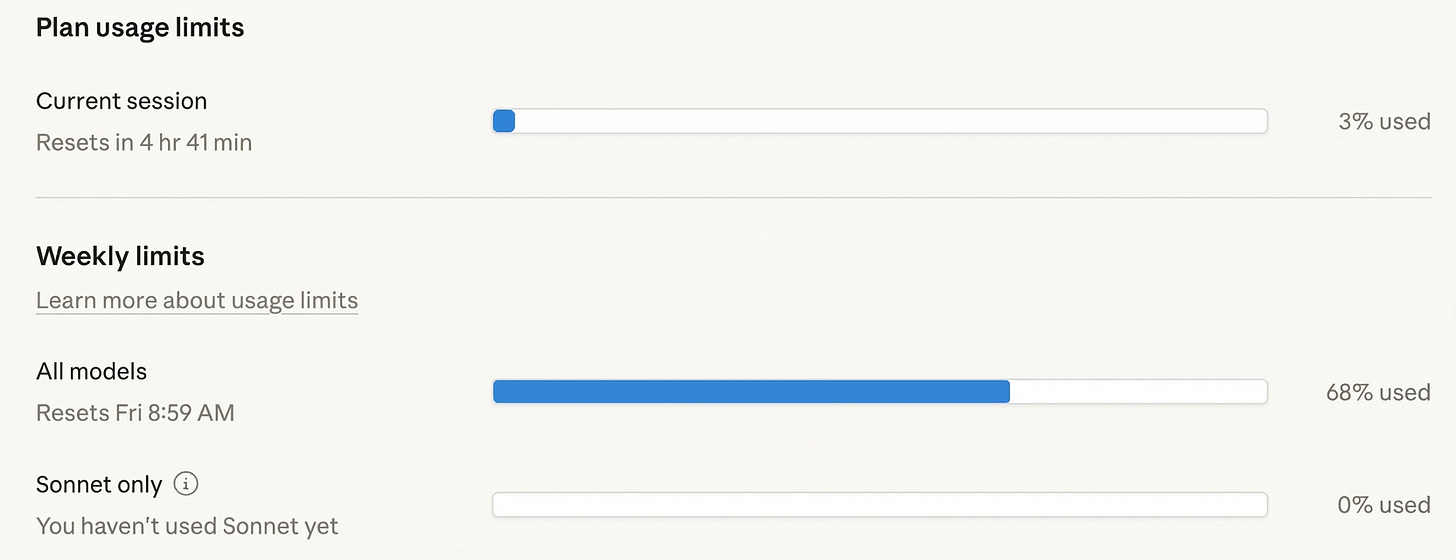

It’s Thursday. My Claude Max usage resets tomorrow morning, and I’m staring at the weekly dashboard.

Sixty-eight percent. I start running the math on what those remaining credits could have built. Not because I need anything built. Because leaving them on the table feels like waste.

I have a gaming laptop sitting on my desk. I bought it during COVID for exactly the reason you’d expect. It hasn’t run a game in months. Now I look at it and think: that GPU should be running local models. It should be doing something. It should be working while I sleep.

As I review this draft, I pause every few minutes. A coding agent working on a bug fix in a project chimes in, requesting feedback. I switch contexts, review its output, give it another task, and come back to writing. The irony isn’t lost on me. I’m writing about the pressure to always have something running while I literally have something running.

One name I’ve been using for this feeling is token anxiety. The persistent, low-grade pressure that you should be doing more with AI tools. Not because you have a specific need, but because the capacity is there and you’re not using it. The term is still settling out, but the feeling is precise.

If this sounds familiar, you’re not imagining it. And you’re not alone.

You’re Not the Only One

Something shifted at the start of this year. Agents got meaningfully more capable of working on larger tasks for longer stretches. Open-source agent tools went viral, and I won’t even get into OpenClaw. The infrastructure for running AI autonomously became accessible to anyone willing to set it up.

And the psychological effects followed immediately.

TechCrunch reported in February that the first signs of burnout are coming from the people who embrace AI the most. Not the skeptics. Not the holdouts. The enthusiasts. The people who leaned in the hardest are the ones hitting the wall.

Harvard Business Review coined the term “brain fry” to describe the cognitive strain from managing multiple AI agents and constant tool switching. Users describe a buzzing feeling, mental fog, difficulty focusing. The tools were supposed to free up mental bandwidth. For many, they’re consuming more of it.

In my own community, Portland AI Engineers, the pattern is visible. The conversations have shifted from “what can I build with this?” to “how do I keep up with all of it?” The excitement is still there, but it’s threaded with something more anxious.

It’s Not About the Tokens

Here’s where I think most of the current conversation gets it wrong. The articles about token anxiety tend to frame it as a cost problem. Watch your spend. Set budgets. Optimize your prompts. That’s useful advice, but it misses the point.

When I looked at those unused Claude Max credits and felt a pang, that wasn’t about twenty dollars. It was about the gap between who I am and who I feel I should be now that the tools have made so much more feel possible.

That’s an identity question, not a budgeting question.

The pressure to do more with the time you have isn’t new. Every developer has felt it. I remember Red Bull-fueled coding marathons during my startup days, grinding through weekends convinced that more hours meant more progress. At some point, I was introducing more bugs than features.

The guilt of “I could be doing more” existed long before agents. What’s changed is the scale. AI dramatically expanded what’s possible in a given hour, and that expansion is still accelerating. The gap between what you’re doing and what you could theoretically be doing has never been wider.

Now an agent can work while you sleep. A local model can run on that idle GPU. The ceiling moved upward, fast, and you’re measuring yourself against it. Every hour without something running starts to feel like an hour wasted. Not because it is. Because it could have been something.

This is the anxiety of unrealized potential. The weight of everything you could be doing but aren’t. When AI was less capable, the menu of what you could build was finite and manageable. Now it feels essentially infinite, and every new capability adds another option you’re choosing not to pursue.

That internal pressure would be hard enough on its own. But you’re not experiencing it in a vacuum. As AI lowers the barrier to building, more people are building visibly. Your feed is full of developers shipping projects, launching tools, posting demos. Research on FOMO consistently shows that social comparison drives anxiety and compulsive behavior. A culture that already valued output and shipping is especially susceptible. So the weight of what you haven’t done meets a constant stream of evidence of what others apparently have.

That’s the two-front pressure: internally, an infinite menu of things you could be pursuing; externally, a highlight reel of people who seem to be pursuing all of it.

We’ve Been Here Before

If you’ve been in this industry for more than a few years, this pressure has a familiar shape. Early cloud adoption brought a similar anxiety. You’d watch competitors spin up infrastructure and feel the pull to migrate everything, immediately, before you fell behind. Containers and Kubernetes created another wave. Everyone needed to be containerized. Everyone needed orchestration. The ones who didn’t felt like they were falling behind.

In hindsight, the teams that adopted everything fastest weren’t necessarily the ones that fared best. They carried the usual operational burdens plus the pain of early-stage tooling, constant up-skilling, and undocumented edge cases. The teams that fared better were often the ones that chose deliberately: which problems actually warranted new approaches, and which were fine with proven solutions.

That pattern is relevant now, but this time is different in scale and in kind. Previous transitions asked you to learn a new tool or platform. You learned it, it stabilized, you moved on. This one is an entirely new abstraction layer: agents, prompts, context management, memory, tool orchestration. It evolves monthly. What you learned about agents three months ago may already be partially obsolete. And unlike any previous wave, the tools work without you. Cloud and containers were things you operated. Agents operate independently.

On top of that, the friction to go deep on something new has nearly vanished. You can spin up a working prototype of almost anything in an afternoon. That’s genuinely powerful, and it’s exactly why choosing where to go deep matters more than ever. The surface area of “things you could learn” grew by an order of magnitude in about six months. No single person can cover all of it, and trying to is the fastest path to the anxiety we’re talking about.

What I’m Trying

I don’t have this figured out. I’m writing about it partly because writing is how I process, and this is something I’m actively processing.

My situation makes this particularly acute. As a full-time AI researcher and writer, I’m hyper-exposed to every new advance. I see it all, every day. And I come from a software engineering background, which means my instinct when I see something interesting is to build with it. That combination is a recipe for constant distraction disguised as diligence. Every new technique, every new tool, triggers the same impulse: I should try this. The discipline I’m still developing is protecting time for the research and writing that are actually my job, which means being very deliberate about what I choose to build.

The practice I’ve landed on is still evolving. I use Obsidian as my second brain. It’s all simple markdown files, which makes it inherently agent-friendly. Inside it, I curate my short- and medium-term career goals. I don’t pretend to know what I’ll be doing in the long term, so I don’t plan for it. I use Claude Code to brainstorm against those goals, pressure-test my priorities, and reinforce my direction when the noise gets loud. The process is never finished. I continually simplify it, strip out what isn’t working, and let it evolve.

The effect is that when something new and shiny lands, I have something to check it against. Not a rigid framework, but a set of compass goals that help me ask: does this serve what I’m actually working toward, or does it just feel like something I should try?

Sometimes the answer is: not right now. And that’s the hardest part. Deliberately choosing to say no to something interesting, not because it’s bad, but because it doesn’t align with where you’re headed. What helps me is giving myself a hedge: bookmark it, set it aside for three or four weeks, then revisit. If it still matters after that window, the community has had time to vet it, documentation has improved, and you’ll learn it faster with better signal. If it’s faded, you saved yourself a detour. Either way, a few weeks doesn’t put you behind. The anxiety says otherwise, but the anxiety is wrong about this.

Your version of this will look different, but the underlying loop is the same. Define your compass goals, even loosely. When new tech appears, check it against those goals. Go deep only where it serves them. Periodically reassess the goals themselves, because the landscape shifts and your direction should be allowed to shift with it.
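
For the code-minded, that loop can be sketched in a few lines of Python. This is an illustration only, not a description of any real tool: `Idea`, `triage`, `REVISIT_AFTER`, and the tag-matching rule are all hypothetical names and assumptions, and the set overlap is a crude stand-in for what is really a judgment call.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed cooling-off window; the essay suggests three or four weeks.
REVISIT_AFTER = timedelta(weeks=3)


@dataclass
class Idea:
    name: str
    tags: set[str]      # topics the new tool or technique touches
    first_seen: date    # when it was bookmarked


def triage(idea: Idea, compass_goals: dict[str, set[str]], today: date) -> str:
    """Return 'go deep', 'bookmark', or 'revisit' for a new idea."""
    # An idea "serves" a goal if it shares at least one topic with it.
    serves_goal = any(idea.tags & topics for topics in compass_goals.values())
    if serves_goal:
        return "go deep"
    # Otherwise, set it aside until the cooling-off window has passed.
    if today - idea.first_seen < REVISIT_AFTER:
        return "bookmark"
    return "revisit"


# Example with made-up goals and dates.
goals = {"writing": {"research", "essays"}, "agents": {"evals"}}
shiny = Idea("local model runner", {"gpu", "local-models"}, date(2000, 1, 1))

print(triage(shiny, goals, date(2000, 1, 5)))   # inside the window
print(triage(shiny, goals, date(2000, 1, 29)))  # window has passed
```

The first call returns `bookmark` and the second returns `revisit`. The interesting part is not the code but the shape: the goals exist before the shiny thing arrives, so the check takes seconds instead of an afternoon.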

The Goal Isn’t More Tokens

If you’re feeling the pull I’ve described here, I want to leave you with this: you’re not behind. The anxiety is real, it’s widespread, and it’s hitting the most engaged practitioners the hardest. That last part is important. Feeling this way is not evidence that you’re doing something wrong. It’s probably evidence that you care about doing it right.

The goal isn’t to use more tokens. It isn’t to have agents running around the clock. It isn’t to repurpose every piece of hardware in your house into an AI box (though I’m still eyeing that laptop).

The goal is intention. High-level compass goals have always been good practice, but they’ve never been more important than in a moment when the friction to build has nearly disappeared. Knowing why you’re spending the tokens you spend. Choosing where to invest instead of feeling guilty about what you’re passing on. Having a direction, even a rough one, that lets you evaluate opportunities against your actual goals instead of against an impossible standard of doing everything.

The tools made so much more feel possible. That’s genuinely exciting. The work is making sure your default is to review, not simply react.